Differential and Integral Calculus

| Site: | Aalto OpenLearning |

| Course: | Differential and Integral Calculus 2021 |

| Book: | Differential and Integral Calculus |


| Date: | Saturday, 7 February 2026, 7:13 AM |

1. Sequences

Basics of sequences

This section contains the most important definitions concerning sequences. The general notion of a sequence is introduced first and is then restricted to real-number sequences.

Definition: Sequence

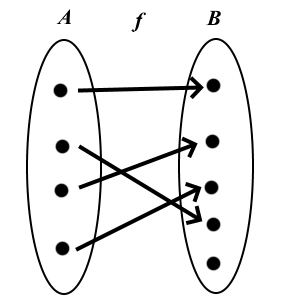

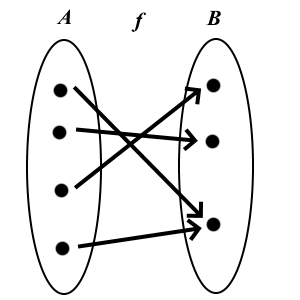

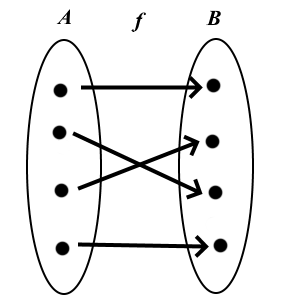

Let \(M\) be a non-empty set. A sequence is a function:

\[f:\mathbb{N}\rightarrow M.\]

In this case we also speak of a sequence in \(M\).

Note. Properties of the set \(\mathbb{N}\) carry over to the sequence: because \(\mathbb{N}\) is ordered, the terms of the sequence are ordered.

Definition: Terms and Indices

Instead of \(f(n)\), a sequence is usually denoted as

\((a_{1}, a_{2}, a_{3}, \ldots) = (a_{n})_{n\in\mathbb{N}} = (a_{n})_{n=1}^{\infty} = (a_{n})_{n}.\)

The numbers \(a_{1},a_{2},a_{3},\ldots\in M\) are called the terms of the sequence.

Because of the mapping \[\begin{aligned} f:\mathbb{N} \rightarrow & M \\ n \mapsto & a_{n}\end{aligned}\] we can assign a unique number \(n\in\mathbb{N}\) to each term. We write this number as a subscript and call it the index; any term of the sequence can thus be identified by its index.

| n | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | \(\ldots\) |
|---|---|---|---|---|---|---|---|---|---|---|
| | \(\downarrow\) | \(\downarrow\) | \(\downarrow\) | \(\downarrow\) | \(\downarrow\) | \(\downarrow\) | \(\downarrow\) | \(\downarrow\) | \(\downarrow\) | |
| \(a_{n}\) | \(a_{1}\) | \(a_{2}\) | \(a_{3}\) | \(a_{4}\) | \(a_{5}\) | \(a_{6}\) | \(a_{7}\) | \(a_{8}\) | \(a_{9}\) | \(\ldots\) |

A few easy examples

Example 1: The sequence of natural numbers

The sequence \((a_{n})_{n}\) defined by \(a_{n}:=n,\,n\in \mathbb{N}\) is called the sequence of natural numbers. Its first few terms are: \[a_1=1,\, a_2=2,\, a_3=3, \ldots\] This special sequence has the property that every term is the same as its index.


Example 2: The sequence of triangular numbers

Triangular numbers get their name from the following geometric picture: coins stacked in a triangular shape.

Starting with one coin in the first layer, we add a second layer of two coins to obtain the second triangle \(D_2\). In turn, adding a layer of three coins to \(D_2\) gives \(D_3\). From a mathematical point of view, each term of this sequence is a sum of consecutive natural numbers. To calculate the 10th triangular number we add the first 10 natural numbers: \[D_{10} = 1+2+3+\ldots+9+10.\] In general, the sequence is defined by \(D_{n} = 1+2+3+\ldots+(n-1)+n.\)

This motivates the following definition:

Notation and Definition: Sum sequence

Let \((a_n)_n\) be a sequence of real numbers. The sum of its first \(n\) terms is written as \[a_1 + a_2 + a_3 + \ldots + a_{n-1} + a_n =: \sum_{k=1}^n a_k.\] The symbol \(\sum\) is the capital Greek letter sigma. Here, the summation index \(k\) runs from 1 to \(n\).

The sum sequence of \((a_n)_n\) is the sequence \((s_n)_n\) with \(s_n := \sum_{k=1}^n a_k\); each of its terms is obtained by summing the terms of \((a_n)_n\) up to index \(n\).

Thus the nth triangular number can be written as: \[D_n = \sum_{k=1}^n k\]
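The sum notation can be checked directly on the triangular numbers. The following minimal Python sketch (the function name `triangular` is chosen here for illustration) computes \(D_n=\sum_{k=1}^n k\) by summing the natural numbers from 1 to \(n\):

```python
def triangular(n: int) -> int:
    """Return D_n = 1 + 2 + ... + n, computed directly from the sum definition."""
    return sum(range(1, n + 1))  # range(1, n + 1) yields k = 1, ..., n

# First few triangular numbers D_1, ..., D_6
print([triangular(n) for n in range(1, 7)])  # [1, 3, 6, 10, 15, 21]
print(triangular(10))                         # 55
```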

Example 3: Sequence of square numbers

The sequence of square numbers \((q_n)_n\) is defined by: \(q_n=n^2\). The terms of this sequence can also be illustrated by the addition of coins.

Interestingly, the sum of two consecutive triangular numbers is a square number. So, for example, we have: \(3+1=4\) and \(6+3=9\). In general this gives the relationship:

\[q_n=D_n + D_{n-1}, \quad n\geq 2.\]
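A short numerical check of the relationship \(q_n = D_n + D_{n-1}\) is sketched below in Python. The helper names are hypothetical, and the check covers only finitely many \(n\), so it illustrates the identity rather than proving it. (A proof follows from \(D_n=\frac{n(n+1)}{2}\), since \(\frac{n(n+1)}{2}+\frac{(n-1)n}{2}=n^2\).)

```python
def triangular(n: int) -> int:
    """D_n = 1 + 2 + ... + n (the empty sum gives D_0 = 0)."""
    return sum(range(1, n + 1))

def square_from_triangles(n: int) -> int:
    """Return D_n + D_{n-1}, which should equal q_n = n**2."""
    return triangular(n) + triangular(n - 1)

# Check the identity q_n = D_n + D_{n-1} for n = 2, ..., 100
assert all(square_from_triangles(n) == n**2 for n in range(2, 101))
print([square_from_triangles(n) for n in range(2, 6)])  # [4, 9, 16, 25]
```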

Example 4: Sequence of cube numbers

Analogously to the sequence of square numbers, we define the cube numbers by \[a_n := n^3.\] The first terms of the sequence are \((1,8,27,64,125,\ldots)\).

Example 5.

Let \((q_n)_n\) with \(q_n := n^2\) be the sequence of square numbers \[(1,4,9,16,25,36,49,64,81,100, \ldots)\] and define the function \(\varphi(n) = 2n\). The composition \((q_{\varphi(n)})_n = (q_{2n})_n\) is the subsequence of even-indexed terms: \[\begin{aligned}(q_{2n})_n &= (q_2,q_4,q_6,q_8,q_{10},\ldots) \\ &= (4,16,36,64,100,\ldots).\end{aligned}\]

Definition: Sequence of differences

Given a sequence \((a_{n})_{n}=(a_{1}, a_{2}, a_{3},\ldots, a_{n},\ldots)\), the sequence \[(a_{n+1}-a_{n})_{n}:=(a_{2}-a_{1}, a_{3}-a_{2},\dots)\] is called the 1st difference sequence of \((a_{n})_{n}\).

The 1st difference sequence of the 1st difference sequence is called the 2nd difference sequence. The \(k\)th difference sequence is defined analogously.

Example 6.

Given the sequence \((a_n)_n\) with \(a_n := \frac{n^2+n}{2}\), i.e. \[(a_n)_n = (1,3,6,10,15,21,28,36,\ldots),\] let \((b_n)_n\) be its 1st difference sequence. Then \[\begin{aligned}(b_n)_n &= (a_2-a_1, a_3-a_2, a_4-a_3,\ldots) \\ &= (2,3,4,5,6,7,8,9,\ldots).\end{aligned}\] A term of \((b_n)_n\) has the general form \[\begin{aligned}b_n &= a_{n+1}-a_{n} \\ &= \frac{(n+1)^2+(n+1)}{2} - \frac{n^2+n}{2} \\ &= \frac{(n+1)^2+(n+1)-n^2 - n }{2} \\ &= \frac{(n^2+2n+1)+1-n^2}{2} \\ &= \frac{2n+2}{2} \\ &= n + 1.\end{aligned}\]
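Difference sequences are easy to compute for finitely many terms. The sketch below (the function name `difference_sequence` is our own) reproduces Example 6 for \(n=1,\ldots,10\) and also shows that the 2nd difference sequence is constant, so \((b_n)_n\) is itself an arithmetic sequence:

```python
def difference_sequence(terms: list[int]) -> list[int]:
    """Return the 1st difference sequence (a_2 - a_1, a_3 - a_2, ...) of a finite list."""
    return [nxt - cur for cur, nxt in zip(terms, terms[1:])]

# a_n = (n^2 + n) / 2 for n = 1, ..., 10; n^2 + n is always even, so // is exact
a = [(n * n + n) // 2 for n in range(1, 11)]
b = difference_sequence(a)  # 1st difference sequence
c = difference_sequence(b)  # 2nd difference sequence

print(b)  # [2, 3, 4, 5, 6, 7, 8, 9, 10]
print(c)  # [1, 1, 1, 1, 1, 1, 1, 1]
```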

Some important sequences

There are a number of sequences that can be regarded as the basis of many ideas in mathematics, but that can also be used in other areas (e.g. physics, biology, or financial calculations) to model real situations. We will consider three of these sequences: the arithmetic sequence, the geometric sequence, and the Fibonacci sequence, i.e. the sequence of Fibonacci numbers.

The arithmetic sequence

There are several ways to define the arithmetic sequence:

Definition A: Arithmetic sequence

A sequence \((a_{n})_{n}\) is called an arithmetic sequence if the difference \(d \in \mathbb{R}\) between any two consecutive terms is constant, i.e. \[a_{n+1}-a_{n}=d \text{ for all } n\in\mathbb{N}.\]

Note: The explicit rule of formation follows directly from definition A: \[a_{n}=a_{1}+(n-1)\cdot d\] For the \(n\)th term of an arithmetic sequence we also have the recursive formation rule: \[a_{n+1}=a_n + d.\]
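The explicit and the recursive formation rules produce the same terms. A minimal Python sketch, with the illustrative values \(a_1=3\) and \(d=4\) and hypothetical function names; integer inputs keep the comparison exact:

```python
def arithmetic_explicit(a1: int, d: int, n: int) -> int:
    """n-th term from the explicit rule a_n = a_1 + (n - 1) * d."""
    return a1 + (n - 1) * d

def arithmetic_recursive(a1: int, d: int, n: int) -> int:
    """n-th term from the recursion a_{k+1} = a_k + d, starting at a_1."""
    term = a1
    for _ in range(n - 1):  # apply the recursion n - 1 times
        term += d
    return term

print([arithmetic_explicit(3, 4, n) for n in range(1, 6)])  # [3, 7, 11, 15, 19]
# Both rules agree for every checked index
assert all(arithmetic_explicit(3, 4, n) == arithmetic_recursive(3, 4, n) for n in range(1, 50))
```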

Definition B: Arithmetic sequence

A non-constant sequence \((a_{n})_{n}\) is called an arithmetic sequence (1st order) when its 1st difference sequence is a sequence of constant value.

This rule of formation gives the arithmetic sequence its name: The middle term of any three consecutive terms is the arithmetic mean of the other two, for example:

\[a_2 = \frac{a_1+a_3}{2}.\]

Example 1.

The sequence of natural numbers \[(a_n)_n = (1,2,3,4,5,6,7,8,9,\ldots)\] is an arithmetic sequence, because the difference, \(d\), between two consecutive terms is always given as \(d=1\).

The geometric sequence

The geometric sequence is defined as follows:

Definition: Geometric sequence

A sequence \((a_{n})_{n}\) with nonzero terms is called a geometric sequence if the ratio of any two consecutive terms is a constant \(q\in\mathbb{R}\), i.e. \[\frac{a_{n+1}}{a_{n}}=q \text{ for all } n\in\mathbb{N}.\]

Note. The recursive relationship \(a_{n+1} = q\cdot a_n\) of the terms of the geometric sequence and the explicit formula for the \(n\)th term of a geometric sequence \[a_n=a_1\cdot q^{n-1}\] follow directly from the definition.

Again the name and the rule of formation of this sequence are connected: for a geometric sequence with positive terms, the middle term of any three consecutive terms is the geometric mean of the other two, e.g. \[a_2 = \sqrt{a_1\cdot a_3}.\]
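The explicit formula, the recursion, and the geometric-mean property can be checked together. The Python sketch below uses the illustrative values \(a_1=3\), \(q=2\) (integers, so all comparisons are exact) and hypothetical function names; the geometric-mean property is tested in the squared form \(a_{n+1}^2=a_n a_{n+2}\), which is equivalent for positive terms:

```python
def geometric_explicit(a1: int, q: int, n: int) -> int:
    """n-th term from the explicit formula a_n = a_1 * q**(n - 1)."""
    return a1 * q ** (n - 1)

def geometric_recursive(a1: int, q: int, n: int) -> int:
    """n-th term from the recursion a_{k+1} = q * a_k, starting at a_1."""
    term = a1
    for _ in range(n - 1):  # apply the recursion n - 1 times
        term *= q
    return term

a1, q = 3, 2  # illustrative values
terms = [geometric_explicit(a1, q, n) for n in range(1, 11)]
print(terms[:5])  # [3, 6, 12, 24, 48]

# Explicit and recursive rules agree
assert all(geometric_explicit(a1, q, n) == geometric_recursive(a1, q, n) for n in range(1, 11))
# Geometric-mean property in squared form: a_{n+1}^2 = a_n * a_{n+2}
assert all(terms[i + 1] ** 2 == terms[i] * terms[i + 2] for i in range(len(terms) - 2))
```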

Example 2.

Let \(a\) and \(q\) be fixed positive numbers. The sequence \((a_n)_n\) with \(a_n := aq^{n-1}\), i.e. \[\left( a_1, a_2, a_3, a_4,\ldots \right) = \left( a, aq, aq^2, aq^3,\ldots \right)\] is a geometric sequence. If \(q\geq1\), the sequence is monotonically increasing (strictly if \(q>1\)); if \(q<1\), it is strictly decreasing. The corresponding range \(\{a,aq,aq^2, aq^3,\ldots\}\) is finite in the case \(q=1\) (namely, a singleton); otherwise it is infinite.

The Fibonacci sequence

The Fibonacci sequence is famous because it plays a role in many biological processes, for instance in plant growth, and is frequently found in nature. The recursive definition is:

Definition: Fibonacci sequence

Let \(a_0 = a_1 = 1\) and let \[a_n := a_{n-2}+a_{n-1}\] for \(n\geq2\). The sequence \((a_n)_n\) is then called the Fibonacci sequence. The terms of the sequence are called the Fibonacci numbers.

The sequence is named after the Italian mathematician Leonardo of Pisa (ca. 1200 AD), also known as Fibonacci (son of Bonacci). He considered the size of a rabbit population and discovered the number sequence \[(1,1,2,3,5,8,13,21,34,55,\ldots).\]
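The recursive definition translates directly into a loop. This Python sketch (the function name `fibonacci` is our own choice) follows the indexing \(a_0=a_1=1\) used above and returns the first terms as a list:

```python
def fibonacci(count: int) -> list[int]:
    """Return the first `count` Fibonacci numbers a_0, a_1, ..., with a_0 = a_1 = 1."""
    terms = [1, 1][:count]
    while len(terms) < count:
        terms.append(terms[-2] + terms[-1])  # a_n = a_{n-2} + a_{n-1}
    return terms

print(fibonacci(10))  # [1, 1, 2, 3, 5, 8, 13, 21, 34, 55]
```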

Example 3.

The structure of sunflower heads can be described by a system of two families of spirals, which radiate out symmetrically from the centre but rotate in opposite directions; there are 55 spirals which run clockwise and 34 which run counter-clockwise.

Pineapples behave very similarly. There we have 21 spirals running in one direction and 34 running in the other. Cauliflower, cacti, and fir cones are also constructed in this manner.

Convergence, divergence and limits

The following chapter deals with the convergence of sequences. We will first introduce the idea of zero sequences. After that we will define the concept of general convergence.

Preliminary remark: Absolute value in \(\mathbb{R}\)

The absolute value function \(x \mapsto |x|\) is fundamental in the study of convergence of real sequences. We therefore recall its main properties:

Definition: Absolute Value

For any given number \(x\in\mathbb{R}\) its absolute value \(|x|\) is defined by \[\begin{aligned}|x|:=\begin{cases}x & \text{for }x\geq0,\\ -x & \text{for }x<0.\end{cases}\end{aligned}\]

Graph of the absolute value function

Theorem: Calculation Rules for the Absolute Value

For \(x,y\in\mathbb{R}\) the following is always true:

1. \(|x|\geq0,\)
2. \(|x|=0\) if and only if \(x=0,\)
3. \(|x\cdot y|=|x|\cdot|y|\) (Multiplicativity),
4. \(|x+y|\leq|x|+|y|\) (Triangle Inequality).

Proof. Parts 1 to 3 follow directly from the definition by distinguishing cases according to the signs of \(x\) and \(y\).

Part 4. We again distinguish cases according to the signs of \(x\) and \(y\).

Case 1.

First let \(x,y \geq 0\). Then it follows that \[\begin{aligned}|x+y|=x+y=|x|+|y|\end{aligned}\] and the desired inequality is shown.

Case 2. Next let \(x,y < 0\). Then \[\begin{aligned}|x+y|=-(x+y)=(-x)+ (-y)=|x|+|y|.\end{aligned}\]

Case 3. Finally we consider the case \(x\geq 0\) and \(y<0\). Here we have two subcases:

For \(x \geq -y\) we have \(x+y\geq 0\) and thus \(|x+y|=x+y\) by the definition of the absolute value. Because \(y<0\), we have \(y<-y\) and therefore also \(x+y < x-y\). Overall: \[\begin{aligned}|x+y| = x+y < x-y = |x|+|y|.\end{aligned}\]

For \(x < -y\) we have \(x+y<0\), so \(|x+y|=-(x+y)=-x-y\). Because \(x\geq0\), we have \(-x \leq x\) and thus \(-x-y\leq x-y\). Overall: \[\begin{aligned}|x+y| = -x-y \leq x-y = |x|+|y|.\end{aligned}\]

The case \(x<0\) and \(y\geq0\) follows from Case 3 by exchanging the roles of \(x\) and \(y\).

\(\square\)

Zero sequences

Definition: Zero sequence

A sequence \((a_{n})_{n}\) is called a zero sequence if for every \(\varepsilon>0\) there exists an index \(n_{0}\in\mathbb{N}\) such that \[|a_{n}| < \varepsilon\] for every \(n\geq n_{0},\, n\in\mathbb{N}\). In this case we also say that the sequence converges to zero.

Informally: We have a zero sequence, if the terms of the sequence with high enough indices are arbitrarily close to zero.

Example 1.

The sequence \((a_n)_n\) defined by \(a_{n}:=\frac{1}{n}\), i.e. \[\left(a_{1},a_{2},a_{3},a_{4},\ldots\right):=\left(\frac{1}{1},\frac{1}{2},\frac{1}{3},\frac{1}{4},\ldots\right)\] is called the harmonic sequence. Its terms are positive for all \(n\in\mathbb{N}\), but as \(n\) increases they decrease and get arbitrarily close to zero.

For example, take \(\varepsilon := \frac{1}{5000}\). Choosing the index \(n_0 = 5001\), it follows that \(a_n \leq \frac{1}{5001} < \frac{1}{5000}=\varepsilon\) for all \(n\geq n_0\).

The harmonic sequence converges to zero

Example 2.

Consider the sequence \[(a_n)_n \text{ where } a_n:=\frac{1}{\sqrt{n}}.\] Let \(\varepsilon := \frac{1}{1000}\). Choosing the index \(n_0=1000001\), we obtain \(a_n \leq \frac{1}{\sqrt{1000001}} < \frac{1}{1000}=\varepsilon\) for all \(n\geq n_0\).
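Finding a suitable \(n_0\) for a given \(\varepsilon\) can be explored numerically. The sketch below assumes that \(|a_n|\) is decreasing, so that once \(|a_n|<\varepsilon\) holds it stays true for all larger \(n\); under that assumption it returns the smallest admissible \(n_0\) by a doubling search followed by a binary search. Exact `Fraction` arithmetic avoids rounding at the boundary \(|a_n|=\varepsilon\), and the helper name `first_index_below` is hypothetical. It also shows why the boundary index itself (e.g. \(n=5000\) for \(\varepsilon=\frac{1}{5000}\)) does not satisfy the strict inequality.

```python
from fractions import Fraction

def first_index_below(is_below) -> int:
    """Return the smallest n >= 1 for which is_below(n) is True.

    Assumes is_below is monotone: once it becomes True it stays True for all
    larger n. This holds for |a_n| < eps when |a_n| is decreasing, as for
    a_n = 1/n and a_n = 1/sqrt(n)."""
    hi = 1
    while not is_below(hi):  # double hi until the condition holds
        hi *= 2
    lo = hi // 2 + 1         # is_below(hi // 2) was False (or hi == 1)
    while lo < hi:           # binary search for the first True in [lo, hi]
        mid = (lo + hi) // 2
        if is_below(mid):
            hi = mid
        else:
            lo = mid + 1
    return lo

# Harmonic sequence, eps = 1/5000: compare 1/n < eps exactly
print(first_index_below(lambda n: Fraction(1, n) < Fraction(1, 5000)))       # 5001
# a_n = 1/sqrt(n), eps = 1/1000: for positive numbers, 1/sqrt(n) < eps <=> 1/n < eps**2
print(first_index_below(lambda n: Fraction(1, n) < Fraction(1, 1000) ** 2))  # 1000001
```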

Note. To check that a sequence is a zero sequence, you must show that for an arbitrary \(\varepsilon > 0\) there is an index \(n_0\) such that all terms \(a_n\) with \(n\geq n_0\) satisfy \(|a_n|<\varepsilon\).

Example 3.

We consider the sequence \((a_n)_n\), defined by \[a_n := \left( -1 \right)^n \cdot \frac{1}{n^2}.\]

Because of the factor \((-1)^n\), consecutive terms have opposite signs; a sequence whose signs change in this way is called an alternating sequence.

We want to show that this sequence is a zero sequence. According to the definition we have to show that for every \(\varepsilon > 0\) there exists \(n_0 \in \mathbb{N}\) such that \[|a_n|< \varepsilon\] for every term \(a_n\) with \(n\geq n_0\).

First let \(\varepsilon > 0\) be arbitrary. Because the inequality \(|a_n|< \varepsilon\) must hold for an arbitrary \(\varepsilon\), the index \(n_0\) will in general depend on \(\varepsilon\). We first require the inequality \[|a_{n_0}|=\left| \frac{(-1)^{n_0}}{{n_0}^2} \right|= \frac{1}{{n_0}^2}<\varepsilon\] for the index \(n_0\). Solving for \(n_0\) gives \[n_0 > \frac{1}{\sqrt{\varepsilon}}.\] For such an index and all \(n\geq n_0\) we then have \(|a_n| = \frac{1}{n^2}\leq\frac{1}{{n_0}^2}<\varepsilon\), so this \(n_0\) has the desired property for every \(\varepsilon\).

Negative examples

The following are examples of non-convergent alternating sequences:

\(a_n = (-1)^n\)

\(a_n = (-1)^n \cdot n\)

Theorem: Properties of Zero Sequences

Let \((a_n)_n\) and \((b_n)_n\) be two sequences. Then:

1. If \((a_n)_n\) is a zero sequence and \(b_n = a_n\) or \(b_n = -a_n\) for each \(n\in\mathbb{N}\), then \((b_n)_n\) is also a zero sequence.
2. If \((a_n)_n\) is a zero sequence and \(-a_n\leq b_n \leq a_n\) for all \(n\in\mathbb{N}\), then \((b_n)_n\) is also a zero sequence.
3. If \((a_n)_n\) is a zero sequence, then \((c\cdot a_n)_n\) is also a zero sequence for every \(c \in \mathbb{R}\).
4. If \((a_n)_n\) and \((b_n)_n\) are zero sequences, then \((a_n + b_n)_n\) is also a zero sequence.

Parts 1 and 2. Let \(\varepsilon>0\). Since \((a_n)_n\) is a zero sequence, there is an index \(n_0 \in \mathbb{N}\) such that \(|a_n|<\varepsilon\) for every \(n\geq n_0\). In part 1 we have \(|b_n| = |a_n|\), and in part 2 the inequality \(-a_n\leq b_n\leq a_n\) gives \(|b_n|\leq a_n = |a_n|\). In both cases \(|b_n|\leq|a_n|<\varepsilon\) for \(n\geq n_0\), which proves parts 1 and 2.

Part 3. If \(c=0\), the result is trivial. Let \(c\neq0\) and \(\varepsilon > 0\). Since \(\frac{\varepsilon}{|c|}>0\), there is an index \(n_0\) such that \[|a_n|<\frac{\varepsilon}{|c|}\] for all \(n\geq n_0\). Multiplying by \(|c|\) gives \[|c\cdot a_n| = |c|\cdot|a_n|<\varepsilon\] for all \(n\geq n_0\).

Part 4.

Let \(\varepsilon>0\). Because \((a_n)_n\) is a zero sequence, there is an \(n_0\in\mathbb{N}\) with \(|a_n|<\frac{\varepsilon}{2}\) for all \(n\geq n_0\). Analogously, for the zero sequence \((b_n)_n\) there is an \(m_0 \in \mathbb{N}\) with \(|b_n|<\frac{\varepsilon}{2}\) for all \(n\geq m_0\).

Then for all \(n > \max(n_0,m_0)\) it follows (using the triangle inequality) that: \[\begin{aligned}|a_n + b_n|\leq|a_n|+|b_n|<\frac{\varepsilon}{2}+\frac{\varepsilon}{2} = \varepsilon\end{aligned}\]

\(\square\)

Convergence, divergence

The concept of zero sequences can be expanded to give us the convergence of general sequences:

Definition: Convergence and Divergence

A sequence \((a_{n})_{n}\) is called convergent to \(a\in\mathbb{R}\) if for every \(\varepsilon>0\) there exists an index \(n_{0}\in\mathbb{N}\) such that \[|a_{n}-a| < \varepsilon \text{ for all }n\in\mathbb{N}\text{ with }n\geq n_{0}.\]

Equivalently:

A sequence \((a_{n})_{n}\) is called convergent to \(a\in\mathbb{R}\) if \((a_{n}-a)_{n}\) is a zero sequence.

Example 4.

We consider the sequence \((a_n)_n\) where \[a_n=\frac{2n^2+1}{n^2+1}.\] By evaluating \(a_n\) for large values of \(n\), we see that \(a_n\) approaches 2 as \(n\to\infty\), so we can conjecture that the limit is \(a=2\).

For a rigorous proof, we show that for every \(\varepsilon > 0\) there exists an index \(n_0\in\mathbb{N}\) such that for every term \(a_n\) with \(n>n_0\) the following relationship holds: \[\left| \frac{2n^2+1}{n^2+1} - 2\right| < \varepsilon.\]

Firstly we estimate the inequality: \[\begin{aligned}\left|\frac{2n^2+1}{n^2+1}-2\right| =&\left|\frac{2n^2+1-2\cdot\left(n^2+1\right)}{n^2+1}\right| \\ =&\left|\frac{2n^2+1-2n^2-2}{n^2+1}\right| \\ =&\left|-\frac{1}{n^2+1}\right| \\ =&\left|\frac{1}{n^2+1}\right| \\ <&\frac{1}{n}.\end{aligned}\]

Now, let \(\varepsilon > 0\) be an arbitrary constant. We then choose the index \(n_0\in\mathbb{N}\), such that \[n_0 > \frac{1}{\varepsilon} \text{, or equivalently, } \frac{1}{n_0} < \varepsilon.\] Finally from the above inequality we have: \[\left|\frac{2n^2+1}{n^2+1}-2\right| < \frac{1}{n} < \frac{1}{n_0} < \varepsilon,\] Thus we have proven the claim and so by definition \(a=2\) is the limit of the sequence.
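The proof of Example 4 can be mirrored in exact arithmetic. This Python sketch (helper names hypothetical, \(\varepsilon=\frac{1}{100}\) chosen for illustration) checks the estimate \(|a_n-2|=\frac{1}{n^2+1}<\frac1n\) on a finite range and computes the index \(n_0>\frac1\varepsilon\) used in the proof. A finite check illustrates the argument but does not replace it:

```python
from fractions import Fraction

def a(n: int) -> Fraction:
    """Term a_n = (2n^2 + 1) / (n^2 + 1) of Example 4, computed exactly."""
    return Fraction(2 * n * n + 1, n * n + 1)

def index_for(eps: Fraction) -> int:
    """Smallest natural number n_0 with n_0 > 1/eps, as chosen in the proof."""
    return int(1 / eps) + 1  # int() of a positive Fraction is its floor

# The estimate from the proof: |a_n - 2| = 1/(n^2 + 1) < 1/n
assert all(abs(a(n) - 2) == Fraction(1, n * n + 1) for n in range(1, 500))
assert all(abs(a(n) - 2) < Fraction(1, n) for n in range(1, 500))

eps = Fraction(1, 100)  # an illustrative tolerance
n0 = index_for(eps)
print(n0)  # 101
# Spot check: every term with n > n_0 in a finite window lies within eps of 2
assert all(abs(a(n) - 2) < eps for n in range(n0 + 1, n0 + 1000))
```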

\(\square\)

If a sequence is convergent, then there is exactly one number which is the limit. This characteristic is called the uniqueness of convergence.

Theorem: Uniqueness of Convergence

Let \((a_{n})_{n}\) be a sequence that converges to \(a\in\mathbb{R}\) and to \(b\in\mathbb{R}\). This implies \(a=b\).

Assume \(a\ne b\); choose \(\varepsilon\in\mathbb{R}\) with \(\varepsilon:=\frac{1}{3}|a-b|.\) Then in particular \([a-\varepsilon,a+\varepsilon]\cap[b-\varepsilon,b+\varepsilon]=\emptyset.\)

Because \((a_{n})_{n}\) converges to \(a\), there is, according to the definition of convergence, an index \(n_{0}\in\mathbb{N}\) with \(|a_{n}-a|< \varepsilon\) for \(n\geq n_{0}.\) Furthermore, because \((a_{n})_{n}\) converges to \(b\), there is also an index \(\widetilde{n_{0}}\in\mathbb{N}\) with \(|a_{n}-b|< \varepsilon\) for \(n\geq\widetilde{n_{0}}.\) For \(n\geq\max\{n_{0},\widetilde{n_{0}}\}\) we have:

\[\begin{aligned}3\varepsilon &= |a-b| \\ &= |(a-a_{n})+(a_{n}-b)| \\ &\leq |a_{n}-a|+|a_{n}-b| \\ &< \varepsilon+\varepsilon=2\varepsilon.\end{aligned}\]

Consequently we have obtained \(3\varepsilon\leq2\varepsilon\), which is a contradiction as \(\varepsilon>0\). Therefore the assumption must be wrong, so \(a=b\).

\(\square\)

Definition: Divergent, Limit

If there exists an \(a\in\mathbb{R}\) to which the sequence \((a_{n})_{n}\) converges, then the sequence is called convergent and \(a\) is called its limit; otherwise the sequence is called divergent.

Notation. \((a_{n})_{n}\) is convergent to \(a\) is also written: \[a_{n}\rightarrow a,\text{ or }\lim_{n\rightarrow\infty}a_{n}=a.\] Such notation is allowed, as the limit of a sequence is always unique by the above Theorem (provided it exists).

Theorem: Bounded Sequences

A convergent sequence \((a_n)_n\) is bounded, i.e. there exists a constant \(r\in\mathbb{R}\) such that \[|a_n| \leq r\] for all \(n\in\mathbb{N}\).

We assume that the sequence \((a_n)_n\) has the limit \(a\). By the definition of convergence with \(\varepsilon = 1\), there is an index \(n_0\) such that \(|a_n - a|<1\) for all \(n\geq n_0\). For these \(n\), the triangle inequality gives

\[|a_n|-|a| \leq |a_n -a| < 1,\]

and therefore \(|a_n|\leq |a|+1\).

Thus for all \(n\in \mathbb{N}\): \[|a_n|\leq \max \left\{ |a_1|,|a_2|,\ldots,|a_{n_0}|,|a|+1 \right\}=:r.\]

\(\square\)

Rules for convergent sequences

Theorem: Subsequences

Let \((a_{n})_{n}\) be a sequence such that \(a_{n}\rightarrow a\), and let \((a_{\varphi(n)})_{n}\) be a subsequence of \((a_{n})_{n}\), where \(\varphi:\mathbb{N}\to\mathbb{N}\) is strictly increasing. Then it follows that \((a_{\varphi(n)})_{n}\rightarrow a\).

Informally: If a sequence is convergent then all of its subsequences are also convergent and in fact converge to the same limit as the original.

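Since \(\varphi:\mathbb{N}\to\mathbb{N}\) is strictly increasing, induction gives \(\varphi(n)\geq n\) for all \(n\). Let \(\varepsilon>0\). Because \(a_{n}\rightarrow a\), there is an \(n_0\) with \(|a_{n}-a|<\varepsilon\) for all \(n\geq n_{0}\). For \(n\geq n_0\) we have \(\varphi(n)\geq n\geq n_0\), and therefore \(|a_{\varphi(n)}-a|<\varepsilon\).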

\(\square\)

Theorem: Rules

Let \((a_{n})_{n}\) and \((b_{n})_{n}\) be sequences with \(a_{n}\rightarrow a\) and \(b_{n}\rightarrow b\). Then for \(\lambda, \mu \in \mathbb{R}\) it follows that:

1. \(\lambda a_n+\mu b_n \to \lambda a + \mu b\),
2. \(a_n b_n \to a b\).

Informally: sums, differences and products of convergent sequences are convergent, and their limits are the corresponding sums, differences and products of the limits.

Part 1. Let \(\varepsilon > 0\). We must show that for all sufficiently large \(n\) \[|\lambda a_n + \mu b_n - \lambda a - \mu b| < \varepsilon.\] We estimate the left-hand side using the triangle inequality: \[|\lambda (a_n-a)+\mu (b_n - b)| \leq |\lambda|\cdot|a_n-a|+|\mu|\cdot|b_n-b|.\]

We may assume \(\lambda\neq0\) and \(\mu\neq0\); if one of them is zero, the corresponding term vanishes and the argument simplifies. Because \((a_n)_n\) and \((b_n)_n\) converge, there are indices \(n_0\) and \(n_1\) such that \[\begin{aligned}|a_n - a| <\ \varepsilon_1 := &\ \textstyle \frac{\varepsilon}{2|\lambda|} \text{ for all }n\geq n_0,\\ |b_n - b| <\ \varepsilon_2 := &\ \textstyle \frac{\varepsilon}{2|\mu|} \text{ for all }n\geq n_1.\end{aligned}\]

Therefore \[\begin{aligned}|\lambda|\cdot|a_n-a|+|\mu|\cdot|b_n-b| < &\ |\lambda|\varepsilon_1 + |\mu|\varepsilon_2 \\ = &\ \textstyle{ \frac{\varepsilon}{2} + \frac{\varepsilon}{2} } = \varepsilon\end{aligned}\] for all numbers \(n \geq \max \{n_0,n_1\}\). Therefore the sequence \[\left( \lambda \left( a_n - a \right) + \mu \left( b_n - b \right) \right)_n\] is a zero sequence and the desired inequality is shown.

Part 2. Let \(\varepsilon > 0\). We have to show that for all sufficiently large \(n\) \[|a_n b_n - a b| < \varepsilon.\] The left-hand side can be estimated as follows: \[\begin{aligned} |a_n b_n - a b| =&\ |a_n b_n - a b_n + a b_n - ab| \\ \leq &\ |b_n|\cdot|a_n-a| + |a|\cdot|b_n - b|.\end{aligned}\] We choose a number \(B>0\) such that \(|b_n| \leq B\) for all \(n\) and \(|a| \leq B\). Such a \(B\) exists because convergent sequences are bounded (Theorem: Bounded Sequences). We can then use the estimate \[|b_n|\cdot|a_n-a| + |a|\cdot|b_n - b| \leq B \cdot \left(|a_n - a| + |b_n - b| \right).\] Finally, choose \(n_0\) such that \(|a_n - a|<\frac{\varepsilon}{2B}\) and \(|b_n - b|<\frac{\varepsilon}{2B}\) for all \(n>n_0\). Putting everything together, \(|a_n b_n - ab| < B\cdot\left(\frac{\varepsilon}{2B}+\frac{\varepsilon}{2B}\right)=\varepsilon\) for all \(n>n_0\), and the desired inequality is shown.

\(\square\)

2. Series


Convergence

Let \((a_k)\) be a sequence with partial sums \(s_n = a_1+a_2+\dots+a_n\). If the sequence of partial sums \((s_n)\) has a limit \(s\in \mathbb{R}\), then the series of the sequence \((a_k)\) converges and its sum is \(s\). This is denoted by \[ a_1+a_2+\dots =\sum_{k=1}^{\infty} a_k = \lim_{n\to\infty}\underbrace{\sum_{k=1}^{n} a_k}_{=s_{n}} = s. \]

Indexing

The partial sums should be indexed in the same way as the sequence \((a_k)\); e.g. the partial sums of a sequence \((a_k)_{k=0}^{\infty}\) are \(s_0= a_0, s_1=a_0+a_1\) etc.

The indexing of a series can be shifted without altering the series: \[\sum_{k=1}^{\infty} a_k =\sum_{k=0}^{\infty} a_{k+1} = \sum_{k=2}^{\infty} a_{k-1}.\]

For example: \[\sum_{k=1}^{\infty} \frac{1}{k^2}=1+\frac{1}{4}+\frac{1}{9}+\dots= \sum_{k=0}^{\infty} \frac{1}{(k+1)^2}\]
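The effect of shifting the index can be checked on partial sums. The following Python sketch (the helper `partial_sums` is a hypothetical name) computes partial sums of \(\sum 1/k^2\) with both indexings shown above, using exact fractions:

```python
from fractions import Fraction

def partial_sums(term, start: int, count: int) -> list:
    """Return the first `count` partial sums of sum_{k >= start} term(k)."""
    sums, total = [], 0
    for k in range(start, start + count):
        total += term(k)
        sums.append(total)
    return sums

# The same series with two different indexings
s_from_1 = partial_sums(lambda k: Fraction(1, k * k), start=1, count=5)
s_from_0 = partial_sums(lambda k: Fraction(1, (k + 1) ** 2), start=0, count=5)
assert s_from_1 == s_from_0
print([str(s) for s in s_from_1[:3]])  # ['1', '5/4', '49/36']
```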


Divergence of a series

A series that does not converge is divergent. This can happen in three different ways:

- the partial sums tend to infinity

- the partial sums tend to minus infinity

- the sequence of partial sums oscillates so that there is no limit.

In the case of a divergent series, the symbol \(\displaystyle\sum_{k=1}^{\infty} a_k\) does not denote a number. We can then interpret it as the sequence of partial sums, which is always well-defined.

Basic results

Geometric series

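A geometric series \[\sum_{k=0}^{\infty} aq^k\] converges if \(|q|<1\) (or \(a=0\)), and then its sum is \(\frac{a}{1-q}\). If \(|q|\ge 1\) and \(a\neq 0\), then the series diverges.

Proof. For \(q\neq 1\) the partial sums satisfy

\[\sum_{k=0}^{n} aq^k =\frac{a(1-q^{n+1})}{1-q}.\]

If \(|q|<1\), then \(q^{n+1}\to 0\) as \(n\to\infty\), so the partial sums tend to \(\frac{a}{1-q}\). If \(|q|\ge 1\) and \(a\neq 0\), then \(|aq^k|\geq|a|>0\) for all \(k\), so the terms do not tend to zero and the series diverges by Theorem 1 below.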

\(\square\)

More generally \[\sum_{k=i}^{\infty} aq^k = \frac{aq^i}{1-q} = \frac{\text{1st term of the series}}{1-q},\text{ for } |q|<1.\]

Example 1.

Calculate the sum of the series \[\sum_{k=1}^{\infty}\frac{3}{4^{k+1}}.\]

Solution. Since \[\frac{3}{4^{k+1}} = \frac{3}{4}\cdot \left( \frac{1}{4}\right)^k,\] this is a geometric series. The sum is \[\frac{3}{4}\cdot \frac{1/4}{1-1/4} = \frac{1}{4}.\]
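For Example 1, the partial-sum formula gives \(s_N=\sum_{k=1}^{N}\frac{3}{4^{k+1}}=\frac14\left(1-\left(\frac14\right)^N\right)\), so the gap to the sum \(\frac14\) is exactly \(\left(\frac14\right)^{N+1}\). A minimal Python sketch in exact arithmetic that checks this together with the closed form of the partial sums (the function name is hypothetical; \(a\) and \(q\) in the first check are illustrative values):

```python
from fractions import Fraction

def geometric_partial_sum(a, q, n):
    """Closed form of sum_{k=0}^{n} a*q**k, valid for q != 1."""
    return a * (1 - q ** (n + 1)) / (1 - q)

# The closed form agrees with direct summation
a, q = Fraction(3, 4), Fraction(1, 4)
for n in range(20):
    assert geometric_partial_sum(a, q, n) == sum(a * q**k for k in range(n + 1))

# Example 1: the partial sums of sum_{k>=1} 3/4^(k+1) fall short of 1/4 by exactly (1/4)^(N+1)
for N in range(1, 15):
    s_N = sum(Fraction(3, 4 ** (k + 1)) for k in range(1, N + 1))
    assert Fraction(1, 4) - s_N == Fraction(1, 4) ** (N + 1)
```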

Rules of summation

If the series \(\sum_{k=1}^{\infty} a_k\) and \(\sum_{k=1}^{\infty} b_k\) converge, then:

- \(\displaystyle{\sum_{k=1}^{\infty} (a_k+b_k) = \sum_{k=1}^{\infty} a_k + \sum_{k=1}^{\infty} b_k}\)

- \(\displaystyle{\sum_{k=1}^{\infty} (c\, a_k) = c\sum_{k=1}^{\infty} a_k}\), where \(c\in \mathbb{R}\) is a constant

Proof. These follow from the corresponding properties for limits of a sequence.

\(\square\)

Note: Compared to limits, there is no similar product rule for series, because even for sums of two elements we have, in general, \[(a_1+a_2)(b_1+b_2) \neq a_1b_1 +a_2b_2.\] The correct generalization is the Cauchy product of two series, where the cross terms are also taken into account.

Theorem 1.

If the series \(\displaystyle{\sum_{k=1}^{\infty} a_k}\) converges, then \[\displaystyle{\lim_{k\to \infty} a_k =0}.\]

Conversely: If \[\displaystyle{\lim_{k\to \infty} a_k \neq 0},\] then the series \(\displaystyle{\sum_{k=1}^{\infty} a_k}\) diverges.

If the sum of the series is \(s\), then \(a_k=s_k-s_{k-1}\to s-s=0\).

\(\square\)

Note: The property \(\lim_{k\to \infty} a_k = 0\) cannot be used to justify the convergence of a series; cf. the following examples. This is one of the most common elementary mistakes people make when studying series!

Example

Explore the convergence of the series \[\sum_{k=1}^{\infty} \frac{k}{k+1} = \frac{1}{2}+\frac{2}{3}+\frac{3}{4}+\dots\]

Solution. The limit of the general term of the series is \[\lim_{k\to\infty}\frac{k}{k+1} = 1.\] As this is different from zero, the series diverges.

Harmonic series

The harmonic series \[\sum_{k=1}^{\infty} \frac{1}{k} = 1+\frac{1}{2}+\frac{1}{3}+\dots\] diverges, although the limit of the general term \(a_k=1/k\) equals zero.

This is a classical result, first proven in the 14th century by Nicole Oresme; since then, a number of proofs using different approaches have been published. Here we present two of them for comparison.

i) An elementary proof by contradiction. Suppose, for the sake of contradiction, that the harmonic series converges, i.e. there exists \(s\in\mathbb{R}\) such that \(s = \sum_{k=1}^{\infty}1/k\). In this case

\[s = \left(\color{#4334eb}{1} + \color{#eb7134}{\frac{1}{2}}\right) + \left(\color{#4334eb}{\frac{1}{3}} + \color{#eb7134}{\frac{1}{4}}\right) + \left(\color{#4334eb}{\frac{1}{5}} + \color{#eb7134}{\frac{1}{6}}\right) + \dots = \sum_{k=1}^{\infty}\left(\color{#4334eb}{\frac{1}{2k-1}} + \color{#eb7134}{\frac{1}{2k}}\right).\]

Now, by direct comparison we get

\[\color{#4334eb}{\frac{1}{2k-1}} > \color{#eb7134}{\frac{1}{2k}} > 0 \text{ for all }k\ge 1~\Rightarrow~\sum_{k=1}^{\infty}\color{#4334eb}{\frac{1}{2k-1}} > \sum_{k=1}^{\infty}\color{#eb7134}{\frac{1}{2k}} = \frac{s}{2},\]

hence, by the rules of summation,

\[\begin{aligned} s &= \sum_{k=1}^{\infty}\color{#4334eb}{\frac{1}{2k-1}} + \sum_{k=1}^{\infty}\color{#eb7134}{\frac{1}{2k}} = \sum_{k=1}^{\infty}\color{#4334eb}{\frac{1}{2k-1}} + \frac{1}{2}\underbrace{\sum_{k=1}^{\infty}\frac{1}{k}}_{=s} \\ &= \sum_{k=1}^{\infty}\color{#4334eb}{\frac{1}{2k-1}} + \frac{s}{2} > \sum_{k=1}^{\infty}\color{#eb7134}{\frac{1}{2k}} + \frac{s}{2} = \frac{s}{2} + \frac{s}{2} = s.\end{aligned}\]

But this implies that \(s>s\), a contradiction. Therefore, the initial assumption that the harmonic series converges must be false and thus the series diverges.

\(\square\)

ii) Proof using an integral: below a histogram with heights \(1/k\) lies the graph of the function \(f(x)=1/(x+1)\) (on each interval \([k-1,k]\) we have \(f(x)\le 1/k\)), so comparing areas we have

\[\sum_{k=1}^{n} \frac{1}{k} \ge \int_0^n\frac{dx}{x+1} =\ln(n+1)\to\infty\]

as \(n\to\infty\).

\(\square\)
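The slow growth described by the bound \(\sum_{k=1}^n\frac1k\ge\ln(n+1)\) is easy to observe numerically. The sketch below (floating point, hypothetical function name) prints the partial sums next to \(\ln(n+1)\); the numbers illustrate the bound but do not prove divergence:

```python
import math

def harmonic(n: int) -> float:
    """Partial sum H_n = 1 + 1/2 + ... + 1/n, in floating point."""
    return math.fsum(1 / k for k in range(1, n + 1))

# The integral bound H_n >= ln(n + 1): the partial sums grow without bound, but slowly
for n in (10, 1000, 100_000):
    print(n, round(harmonic(n), 4), round(math.log(n + 1), 4))
```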

Positive series

Finding the sum of a series in closed form is often difficult or even impossible; sometimes only a numerical approximation can be computed. The first goal is then to find out whether the series is convergent or divergent.

A series \(\displaystyle{\sum_{k=1}^{\infty} p_k}\) is positive, if \(p_k > 0\) for all \(k\).

Convergence of positive series is quite straightforward:

Theorem 2.

A positive series converges if and only if the sequence of partial sums is bounded from above.

Why? Because the partial sums form an increasing sequence, and an increasing sequence converges if and only if it is bounded from above.

Example

Show that the partial sums of a superharmonic series \[\sum_{k=1}^{\infty}\frac{1}{k^2}\] satisfy \(s_n<2\) for all \(n\), so the series converges.

Solution. This is based on the formula \[\frac{1}{k^2} < \frac{1}{k(k-1)} = \frac{1}{k-1}-\frac{1}{k},\] for \(k\ge 2\), as it implies that \[\sum_{k=1}^n\frac{1}{k^2} < 1+ \sum_{k=2}^n\frac{1}{k(k-1)} =2-\frac{1}{n}< 2\] for all \(n\ge 2\).

This can also be proven with integrals.

Leonhard Euler found out in 1735 that the sum is actually \(\pi^2/6\). His proof was based on comparing the series expansion and the product expansion of the sine function.
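The telescoping bound and Euler's value can be compared numerically. The sketch below checks \(s_n<2-\frac1n\) exactly with fractions for small \(n\), and compares a floating-point partial sum with \(\pi^2/6\); the missing tail \(\sum_{k>n}\frac1{k^2}\) lies between \(\frac1{n+1}\) and \(\frac1n\) by comparison with \(\int\frac{dx}{x^2}\). The function name is our own:

```python
import math
from fractions import Fraction

def superharmonic_partial_sum(n: int) -> Fraction:
    """Exact partial sum s_n = 1 + 1/4 + ... + 1/n^2."""
    return sum((Fraction(1, k * k) for k in range(1, n + 1)), Fraction(0))

# Telescoping bound from the example: s_n < 2 - 1/n for n >= 2
for n in range(2, 60):
    assert superharmonic_partial_sum(n) < 2 - Fraction(1, n)

# Compare with Euler's value pi^2/6 in floating point
n = 1000
gap = math.pi ** 2 / 6 - math.fsum(1 / k**2 for k in range(1, n + 1))
assert 1 / (n + 1) < gap < 1 / n  # the tail lies between 1/(n+1) and 1/n
print(gap)
```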

Absolute convergence

Definition

A series \(\displaystyle{\sum_{k=1}^{\infty} a_k}\) converges absolutely if the positive series \(\sum_{k=1}^{\infty} |a_k|\) converges.

Theorem 3.

An absolutely convergent series converges (in the usual sense) and \[\left| \sum_{k=1}^{\infty} a_k \right| \le \sum_{k=1}^{\infty} |a_k|.\]

This is a special case of the Comparison principle, see later.

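Suppose that \(\sum_k |a_k|\) converges. We study separately the positive and negative parts of \(\sum_k a_k\):

Let \[b_k=\max (a_k,0)\ge 0 \text{ and } c_k=-\min (a_k,0)\ge 0.\]

Since \(b_k,c_k\le |a_k|\), the partial sums of the series \(\sum b_k\) and \(\sum c_k\) (with nonnegative terms) are bounded from above by \(\sum_k |a_k|\), so these series converge by Theorem 2.

Also, \(a_k=b_k-c_k\), so \(\sum a_k\) converges as a difference of two convergent series. Finally, the triangle inequality gives \(\left|\sum_{k=1}^{n} a_k\right|\le\sum_{k=1}^{n}|a_k|\) for every \(n\); letting \(n\to\infty\) yields the claimed inequality.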

\(\square\)

Example

Study the convergence of the alternating (= the signs alternate) series \[\sum_{k=1}^{\infty}\frac{(-1)^{k+1}}{k^2}=1-\frac{1}{4}+\frac{1}{9}-\dots\]

Solution. Since \[\displaystyle{\left| \frac{(-1)^{k+1}}{k^2}\right| =\frac{1}{k^2}}\] and the superharmonic series \[\sum_{k=1}^{\infty}\frac{1}{k^2}\] converges, then the original series is absolutely convergent. Therefore it also converges in the usual sense.

Alternating harmonic series

The usual convergence and absolute convergence are, however, different concepts:

Example

The alternating harmonic series \[\sum_{k=1}^{\infty}\frac{(-1)^{k+1}}{k} = 1-\frac{1}{2}+\frac{1}{3}-\frac{1}{4}+\dots\] converges, but not absolutely.

(Idea) Drawing a graph of the partial sums \((s_n)\) suggests that the even- and odd-index partial sums \(s_{2n}\) and \(s_{2n+1}\) are monotone (increasing and decreasing, respectively) and converge to the same limit.

The sum of this series is \(\ln 2\), which can be derived by integrating the formula of a geometric series.

points are joined by line segments for visualization purposes
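The behaviour of the partial sums can also be checked in floating point. This Python sketch (the function name is hypothetical) computes \(s_1,\ldots,s_{2000}\) and verifies that the even-index partial sums increase, the odd-index ones decrease, and \(\ln 2\) lies between them:

```python
import math

def alternating_harmonic_partial_sums(n: int) -> list[float]:
    """Partial sums s_1, ..., s_n of sum_{k>=1} (-1)^(k+1) / k."""
    sums, total = [], 0.0
    for k in range(1, n + 1):
        total += (-1) ** (k + 1) / k
        sums.append(total)
    return sums

s = alternating_harmonic_partial_sums(2000)
even = s[1::2]  # s_2, s_4, s_6, ... (the list is 0-based)
odd = s[0::2]   # s_1, s_3, s_5, ...
assert all(x < y for x, y in zip(even, even[1:]))  # even-index partial sums increase
assert all(x > y for x, y in zip(odd, odd[1:]))    # odd-index partial sums decrease
assert even[-1] < math.log(2) < odd[-1]            # ln 2 is squeezed between them
print(even[-1], math.log(2), odd[-1])
```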

Convergence tests

Comparison test

The preceding results generalize to the following:

Theorem 4.

(Majorant) If \(|a_k|\le p_k\) for all \(k\) and \(\sum_{k=1}^{\infty} p_k\) converges, then also \(\sum_{k=1}^{\infty} a_k\) converges.

(Minorant) If \(0\le p_k \le a_k\) for all \(k\) and \(\sum p_k\) diverges, then also \(\sum a_k\) diverges.

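Proof for Majorant. Since \[a_k=|a_k|-(|a_k|-a_k)\] and

\[0\le |a_k|-a_k \le 2|a_k|\le 2p_k,\]

the positive series \(\sum |a_k|\) and \(\sum (|a_k|-a_k)\) both converge by comparison with the convergent series \(\sum p_k\) and \(\sum 2p_k\). Hence \(\sum a_k\) is convergent as a difference of two convergent positive series.

Here we use the elementary convergence property (Theorem 2) for positive series; this is not circular reasoning!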

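Proof for Minorant. Since \(a_k\ge p_k\ge 0\), the partial sums of \(\sum a_k\) are at least as large as the partial sums of \(\sum p_k\), which tend to infinity. Hence the partial sums of \(\sum a_k\) tend to infinity, and the series is divergent.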

\(\square\)

Example

Study the convergence of \[ \sum_{k=1}^{\infty} \frac{1}{1+k^3} \ \text{ and }\ \sum_{k=1}^{\infty} \frac{1}{\sqrt{k}}. \]

Solution. Since \[0<\frac{1}{1+k^3} < \frac{1}{k^3}\le \frac{1}{k^2}\] for all \(k\in \mathbb{N}\), the first series is convergent by the majorant principle.

On the other hand, \[\displaystyle{\frac{1}{\sqrt{k}}\ge \frac{1}{k}}\] for all \(k\in\mathbb{N}\), so the second series has a divergent harmonic series as a minorant. The latter series is thus divergent.
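A quick numerical comparison, using only the Python standard library, shows the majorant and minorant relations of this example side by side. Finite partial sums cannot prove divergence; the sketch only illustrates that the partial sums of \(\sum 1/\sqrt{k}\) stay above those of the harmonic series, which grow roughly like \(\ln N\).

```python
import math

N = 100_000
first = second = harmonic = superharmonic = 0.0
for k in range(1, N + 1):
    first += 1 / (1 + k**3)        # terms of the first series
    superharmonic += 1 / k**2      # its convergent majorant
    second += 1 / math.sqrt(k)     # terms of the second series
    harmonic += 1 / k              # its divergent minorant

# The first partial sum stays below the majorant's partial sum, which is bounded.
print(first, superharmonic)
# The second partial sum dominates the harmonic one, which exceeds ln N.
print(second, harmonic, math.log(N))
```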

Ratio test

In practice, one of the best ways to study the convergence or divergence of a series is the so-called ratio test, where the terms of the series are compared to a suitable geometric series:

Theorem 5a.

Suppose that there is a constant \(0< Q < 1\) such that \[ \left| \frac{a_{k+1}}{a_k} \right| \le Q\] for all indices \(k\ge k_0\).

Then the series \(\sum a_k\) converges (and the rate of convergence is comparable to that of the geometric series \(\sum Q^k\), or even faster).

Proof. We may assume that the inequality is valid for all indices \(k\), because the initial part has no effect on the convergence (although it does affect the sum!).

This now implies that

\[|a_{k}|\le Q|a_{k-1}|\le Q^2|a_{k-2}|\le \dots\le Q^{k-1}|a_1|,\]

so the series has a convergent geometric majorant.

\(\square\)

Limit form of ratio test

Theorem 5b.

Suppose that the limit \[\lim_{k\to \infty} \left| \frac{a_{k+1}}{a_k} \right| = q\] exists. Then the series \(\sum a_k\) \[ \begin{cases}\text{converges} & \text{ if } 0\le q< 1,\\ \text{diverges} & \text{ if } q > 1,\\ \text{may be convergent or divergent} & \text{ if } q=1. \end{cases} \]

(Idea) For a geometric series the ratio of two consecutive terms is exactly \(q\). According to the ratio test, the convergence of some other series can also be investigated in a similar way, when the exact ratio \(q\) is replaced by the above limit.

If \(0\le q<1\), choose \(\varepsilon =(1-q)/2>0\) in the formal definition of a limit. Then starting from some index \(k\ge k_{\varepsilon}\) we have \[ |a_{k+1}/a_k| < q + \varepsilon = (q+1)/2 = Q < 1, \] and the claim follows from Theorem 5a.

In the case \(q>1\) we have \(|a_{k+1}|>|a_k|\) for all sufficiently large \(k\), so the general term of the series does not tend to zero and the series diverges.

The last case \(q=1\) does not give any information. This case occurs for both the harmonic series (\(a_k=1/k\), divergent!) and the superharmonic series (\(a_k=1/k^2\), convergent!). In these cases the convergence or divergence must be settled in some other way, as we did before.

\(\square\)

Example

Is the series \[\sum_{k=1}^{\infty}\frac{(-1)^{k+1}k}{2^k}= \frac{1}{2}-\frac{2}{4}+\frac{3}{8}-\dots\] convergent?

Solution. Here \(a_k=(-1)^{k+1}k/2^k\), so \[ \left| \frac{a_{k+1}}{a_k}\right| = \left| \frac{(-1)^{k+2}(k+1)/2^{k+1}}{(-1)^{k+1}k/2^k}\right| =\frac{k+1}{2k} =\frac{1}{2}+\frac{1}{2k}\to \frac{1}{2} < 1, \] when \(k\to\infty\). By the ratio test the series is convergent.
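The following Python sketch evaluates the ratios \(|a_{k+1}/a_k|\) of this example and a long partial sum. The comparison value \(2/9\) is an assumption taken from the standard formula \(\sum_{k\ge 1}kx^k=x/(1-x)^2\) for \(|x|<1\), applied with \(x=-1/2\) (the series here equals \(-\sum_k k(-1/2)^k\)); that formula is not derived in this text.

```python
def a(k):
    """General term a_k = (-1)^(k+1) k / 2^k of the example series."""
    return (-1) ** (k + 1) * k / 2**k

# Ratios |a_{k+1}/a_k| = 1/2 + 1/(2k) approach q = 1/2 < 1.
ratios = [abs(a(k + 1) / a(k)) for k in range(1, 60)]
print(ratios[:3])  # k = 1, 2, 3

# The partial sums settle quickly, at roughly the geometric rate of Q = 1/2.
s = 0.0
for k in range(1, 60):
    s += a(k)
print(s, 2 / 9)
```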

3. Continuity


In this section we define the limit of a function \(f\colon S\to \mathbb{R}\) at a point \(x_0\). It is assumed that the reader is already familiar with the limit of a sequence, the real line and the general concept of a function of one real variable.

Limit of a function

Let \(S\) be a subset of the real numbers, and assume that \(x_0\) is a point for which there is a sequence of points \(x_k\in S\) such that \(x_k\to x_0\) as \(k\to \infty\). Here the set \(S\) is often the set of all real numbers, but sometimes an interval (open or closed).

Example 1.

Note that it is not necessary for \(x_0\) to be in \(S\). For example, the sequence \(x_k = 1/k\to 0\) as \(k\to \infty\) in \(S=]0,2[\), and \(x_k\in S\) for all \(k=1,2,\ldots\) but \(0\) is not in \(S\).

Limit of a function

We consider a function \(f\) defined in the set \(S\). Then we define the limit of the function \(f\colon S\to \mathbb{R}\) at \(x_0\) as follows.

Definition 1: Limit of a function

Suppose that \(S\subset \mathbb{R}\) and \(f\colon S\to \mathbb{R}\) is a function. Then we say that \(f\) has a limit \(y_{0}\) at \(x_{0}\), and write \[\lim_{x \to x_{0}}f(x)=y_{0},\] if \(f(x_{k})\to y_{0}\) as \(k\to \infty\) for every sequence \((x_{k})\) in \(S\setminus\{x_0\}\) such that \(x_{k}\to x_{0}\) as \(k\to \infty\).

Example 2.

The function \(f\colon \mathbb{R} \to \mathbb{R}\) defined by \(f(x)=x^2\) has a limit \(0\) at the point \(x=0\).

Function \(y=x^2\).

Example 3.

The function \(g\colon\mathbb{R}\to \mathbb{R}\) defined by \[g(x)= \left\{\begin{array}{rl}0 & \text{ for }x<0, \\ 1 & \text{ for }x\ge 0\end{array}\right.\] does not have a limit at the point \(x=0\). To prove this formally, take the sequences \((x_k)\), \((y_k)\) defined by \(x_k=1/k\) and \(y_k=-1/k\) for \(k=1,2,\ldots\). Both sequences lie in \(\mathbb{R}\setminus\{0\}\) and tend to \(0\), but \(g(x_k)=1\) and \(g(y_k)=0\) for every \(k\), so the sequences \((g(x_k))\) and \((g(y_k))\) have different limits.

Function \[g(x)= \left\{\begin{array}{rl}0 & \text{ for }x<0, \\ 1 & \text{ for }x\ge 0.\end{array}\right.\]

Example 4.

The function \(f(x)=x \sin(1/x)\), \(x>0\), has the limit \(0\) at \(0\), because \(|x\sin(1/x)|\le x\) for all \(x>0\).

Function \(y=x\sin(1/x)\) for \(x>0\).

Example 5.

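The function \(g(x)= \sin(1/x)\), \(x>0\), does not have a limit at \(0\). For example, the sequences \(x_k=1/(k\pi)\) and \(y_k=1/(\pi/2+2k\pi)\) both tend to \(0\), but \(g(x_k)=\sin(k\pi)=0\) and \(g(y_k)=\sin(\pi/2+2k\pi)=1\) for every \(k\).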

Function \(y=\sin(1/x)\) for \(x>0\).
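Examples 4 and 5 can be illustrated with the sequences of the sequence definition of a limit. The sketch below (Python, standard library only) evaluates \(\sin(1/x)\) along \(x_k=1/(k\pi)\) and \(y_k=1/(\pi/2+2k\pi)\), and \(x\sin(1/x)\) along the same points. Because of floating-point rounding, values that should be exactly \(0\) or \(1\) are only approximately so.

```python
import math

K = range(1, 6)
x = [1 / (k * math.pi) for k in K]                    # x_k -> 0, sin(1/x_k) = sin(k*pi) = 0
y = [1 / (math.pi / 2 + 2 * k * math.pi) for k in K]  # y_k -> 0, sin(1/y_k) = 1

print([math.sin(1 / t) for t in x])      # approximately 0 (up to rounding)
print([math.sin(1 / t) for t in y])      # approximately 1 (up to rounding)
print([t * math.sin(1 / t) for t in y])  # x*sin(1/x) is bounded by x, so it tends to 0
```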

One-sided limits

An important property of limits is that they are always unique. That is, if \(\lim_{x\to x_0} f(x)=a\) and \(\lim_{x\to x_0} f(x)=b\), then \(a=b\). Although a function may have only one limit at a given point, it is sometimes useful to study the behavior of the function when \(x_k\) approaches the point \(x_0\) from the left or the right side. These limits are called the left and the right limit of the function \(f\) at \(x_0\), respectively.

Definition 2: One-sided limits

Suppose \(S\) is a set in \(\mathbb{R}\) and \(f\) is a function defined on the set \(S\setminus\{x_0\}\). Then we say that \(f\) has a left limit \(y_{0}\) at \(x_{0}\), and write \[\lim_{x \to x_{0}-}f(x)=y_{0},\] if \(f(x_{k})\to y_{0}\) as \(k\to \infty\) for every sequence \((x_{k})\) in the set \(S\cap ]-\infty,x_0[ =\{ x\in S : x < x_0 \}\) such that \(x_{k}\to x_{0}\) as \(k\to \infty\).

Similarly, we say that \(f\) has a right limit \(y_{0}\) at \(x_{0}\), and write \[\lim_{x \to x_{0}+}f(x)=y_{0},\] if \(f(x_{k})\to y_{0}\) as \(k\to \infty\) for every sequence \((x_{k})\) in the set \(S\cap ]x_0,\infty[ =\{ x\in S : x_0 < x \}\) such that \(x_{k}\to x_{0}\) as \(k\to \infty\).

Theorem 1: Limit of a function

A function \(f\colon S\to \mathbb{R}\) has a limit \(y_0\) at the point \(x_0\) if and only if \[\lim_{x \to x_{0}-}f(x)= \lim_{x \to x_{0}+}f(x)=y_{0}.\]

Example 6.

The sign function \[\mathrm{sgn}(x)= \frac{x}{|x|}\] is defined on \(S= \mathbb{R}\setminus \{0\}\). Its left and right limits at \(0\) are \[\lim_{x\to 0-} \mathrm{sgn}(x)= -1,\qquad \lim_{x\to 0+} \mathrm{sgn}(x)= 1.\] Since these one-sided limits are different, Theorem 1 shows that the function \(\mathrm{sgn}(x)\) does not have a limit at \(0\).

Function \(y = \frac{x}{|x|}\).

Example 7.

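The function \(f\colon \mathbb{R}\setminus \{0\} \to \mathbb{R}\), \[f(x) = \frac{1}{x},\] does not have finite one-sided limits at \(0\): its values tend to \(-\infty\) as \(x\to 0-\) and to \(+\infty\) as \(x\to 0+\).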

Limit rules

The following limit rules are immediately obtained from the definition and basic algebra of real numbers.

Theorem 2: Limit rules

Let \(c\in \mathbb{R}, \lim_{x\to x_{0}} f(x)=a\) and \(\lim_{x\to x_{0}} g(x)=b.\) Then

- \(\lim_{x\to x_{0}} (cf)(x)=ca\),

- \(\lim_{x\to x_{0}} (f+g)(x)=a+b\),

- \(\lim_{x\to x_{0}} (fg)(x)=ab\),

- \(\lim_{x\to x_{0}} (f/g)(x)=a/b \ (\text{if} \ b \neq 0)\).

Example 8.

Finding limits by evaluating \(f(x_0)\). In c) the expression is not defined at \(x_0=2\), so it is first simplified for \(x\neq 2\):

a) \[\lim_{x\to 2}(5x-3)=10-3=7.\]

b) \[\lim_{x\to -2}\frac{3x+2}{x+5} = \frac{-6+2}{-2+5}=-\frac{4}{3}.\]

c) \[\lim_{x\to 2} \frac{x^2-4}{x-2} = \lim_{x\to 2} \frac{(x+2)(x-2)}{x-2} = \lim_{x\to 2}(x+2) = 4.\]
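Part c) can also be illustrated directly with Definition 1: the sketch below evaluates \((x^2-4)/(x-2)\) along the hypothetical sequences \(2\pm 10^{-k}\), which approach \(2\) without ever equalling it. This is a numerical illustration under floating-point arithmetic, not a proof.

```python
def f(x):
    """(x^2 - 4)/(x - 2), defined for x != 2."""
    return (x**2 - 4) / (x - 2)

# Sequences approaching x0 = 2 from the right and from the left (never equal to 2).
right = [f(2 + 10.0**-k) for k in range(1, 7)]
left = [f(2 - 10.0**-k) for k in range(1, 7)]
print(right)  # values close to 2 + 2 = 4, slightly above
print(left)   # values close to 4, slightly below
```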

Limits and continuity

In this section, we define continuity of a function. The intuitive idea behind continuity is that the graph of a continuous function is a connected curve. However, this is not sufficient as a mathematical definition for several reasons. For example, using this idea alone, one cannot easily decide whether \(\tan(x)\) is a continuous function or not.

For continuity of a function \(f\) at a given point \(x_0\), it is required that:

- \(f(x_0)\) is defined,

- \(\lim_{x \to x_0} f(x)\) exists (and is finite),

- \(\lim_{x \to x_0} f(x) = f(x_0)\).

In other words:

Definition 3: Continuity

A function \(f\colon S\to \mathbb{R}\) is continuous at a point \(x_{0}\in S\), if \[\lim_{x\to x_{0}}f(x)=f(x_{0}).\] A function \(f\colon S\to \mathbb{R}\) is continuous, if it is continuous at every point \(x_{0}\in S\).

Example 1.

Let \(c\in \mathbb{R}\). Functions \(f,g,h\) defined by \(f(x)=c\), \(g(x)=x\), \(h(x)=|x|\) are continuous at every point \(x\in \mathbb{R}\).

Why? If \(x_{k}\to x_{0}\), then \(f(x_{k})=c\) and \(\lim_{k\to \infty}f(x_k)= c=f(x_{0})\). For \(g\), we have \(g(x_{k})=x_{k}\) and hence, \(\lim_{k\to\infty} g(x_k)=x_{0}=g(x_{0})\). Similarly, \(h(x_{k})=|x_{k}|\) and \(\lim_{k\to\infty}h(x_k)= |x_{0}|=h(x_{0})\).

Continuous functions \(y=c\), \(y=x\) and \(y=|x|\).

Example 2.

Let \(x_{0}\in \mathbb{R}\). We define a function \(f\colon\mathbb{R}\to \mathbb{R}\) by \[f(x)= \left\{\begin{array}{rl}2 & \text{ for }x \lt x_{0}, \\ 3 & \text{ for }x\geq x_{0}.\end{array}\right.\] Then \[\lim_{x \to x_{0}^{-}}f(x)=2,\text{ and } \lim_{x \to x_{0}^{+}}f(x)=3.\] Therefore \(f\) is not continuous at the point \(x_{0}\).

Some basic properties of continuous functions of one real variable are given next. From the limit rules (Theorem 2) we obtain:

Theorem 3.

The sum, the product and the difference of continuous functions are continuous. In particular, polynomials are continuous functions. If \(f\) and \(g\) are polynomials and \(g(x_{0})\neq 0\), then \(f/g\) is continuous at the point \(x_{0}\).

A composition of continuous functions is continuous if it is defined:

Theorem 4.

Let \(f\colon \mathbb{R}\to\mathbb{R}\) and \(g\colon \mathbb{R}\to \mathbb{R}\). Suppose that \(f\) is continuous at a point \(x_{0}\) and \(g\) is continuous at \(f(x_{0})\). Then \(g\circ f\colon \mathbb{R}\to \mathbb{R}\) is continuous at a point \(x_{0}\).

Note. If \(f\) is continuous, then \(|f|\) is continuous.

Why?

Write \(g(x):=|x|\). Then \((g\circ f)(x)=|f(x)|\), which is continuous by Theorem 4, since \(g\) is continuous by Example 1.

Note. If \(f\) and \(g\) are continuous, then \(\max (f,g)\) and \(\min (f,g)\) are continuous. (Here \(\max (f,g)(x):=\max \{f(x),g(x)\}\).)

Why?

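For real numbers \(a,b\) we have \[\begin{cases}(a+b)+|a-b|=2\max(a,b), \\ (a+b)-|a-b|=2\min(a,b). \end{cases} \] Hence \(\max(f,g)=\frac{1}{2}\bigl((f+g)+|f-g|\bigr)\) and \(\min(f,g)=\frac{1}{2}\bigl((f+g)-|f-g|\bigr)\) are continuous by Theorem 3 and the previous note.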

\[\text{Function }f(x)= \left\{\begin{array}{rl}2 & \text{ for }x\lt x_{0}, \\ 3 & \text{ for }x\geq x_{0}. \end{array}\right.\]

Delta-epsilon definition

The so-called \((\varepsilon,\delta)\)-definition for continuity is given next. The basic idea behind this test is that, for a function \(f\) continuous at \(x_0\), the values of \(f(x)\) should get closer to \(f(x_0)\) as \(x\) gets closer to \(x_0\).

This is the standard definition of continuity in mathematics, because it also works for more general classes of functions than the ones on this course, but it is not used in high-school mathematics. This important definition will be studied in depth in Analysis 1 / Mathematics 1.

\((\varepsilon,\delta)\)-test:

Theorem 5: \((\varepsilon,\delta)\)-definition

Let \(f: S\to \mathbb{R}\). Then the following conditions are equivalent:

- \(\lim_{x\to x_0} f(x)= y_0\),

- For all \(\varepsilon> 0\) there exists \(\delta >0\) such that \(|f(x) - y_0| <\varepsilon\) for all \(x\in S\) with \(0 < |x-x_0| < \delta\).

Example 3.

From Theorem 3 we already know that the function \(f: \mathbb{R} \to \mathbb{R}\) defined by \(f(x) = 4x\) is continuous. We can also use the \((\varepsilon,\delta)\)-definition to prove this.

Proof. Let \(x_0 \in \mathbb{R}\) and \(\varepsilon > 0\). Now \[|f(x) - f(x_0)| = |4x - 4x_0| = 4|x - x_0| < \varepsilon,\] when \[|x - x_0| < \delta \text{, where } \delta = \frac{\varepsilon}{4}.\]

So for all \(\varepsilon > 0\) there exists \(\delta > 0\) such that if \(|x - x_0| < \delta\), then \(|f(x) - f(x_0)| < \varepsilon\) for all \(x \in \mathbb{R}\). Thus by Theorem 5 \(\lim_{x \to x_0} f(x) = f(x_0)\) for all \(x_0 \in \mathbb{R}\), and by definition this means that the function \(f: \mathbb{R} \to \mathbb{R}\) is continuous.

\(\square\)
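The choice \(\delta=\varepsilon/4\) can be spot-checked numerically. The Python sketch below uses a hypothetical helper `check_delta` that samples random points with \(|x-x_0|<\delta\) and tests whether \(|f(x)-f(x_0)|<\varepsilon\); sampling can only support the proof above, never replace it.

```python
import random

def f(x):
    return 4 * x

def check_delta(x0, eps, trials=10_000, seed=0):
    """Sample x with |x - x0| < delta = eps/4 and test |f(x) - f(x0)| < eps.

    Returns True if no sampled point violates the inequality.
    This is a numerical spot check, not a proof.
    """
    rng = random.Random(seed)
    delta = eps / 4
    for _ in range(trials):
        x = x0 + rng.uniform(-delta, delta)
        if abs(x - x0) < delta and not abs(f(x) - f(x0)) < eps:
            return False
    return True

print(all(check_delta(x0, eps) for x0 in (-3.0, 0.0, 2.5) for eps in (1.0, 1e-3)))
```

Multiplying by \(4\) is exact in binary floating point, so rounding does not create spurious failures in this particular check.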


Example 4.

Let \(x_{0}\in \mathbb{R}\). We define a function \(f\colon\mathbb{R}\to \mathbb{R}\) by \[f(x)= \left\{\begin{array}{rl}2 & \text{ for }x \lt x_{0}, \\ 3 & \text{ for }x \geq x_{0}.\end{array}\right.\] In Example 2 we saw that this function is not continuous at the point \(x_0\). To prove this using the \((\varepsilon,\delta)\)-test, we need to find some \(\varepsilon > 0\) such that for every \(\delta > 0\) there is a point \(x_\delta \in \mathbb{R}\) with \(0<|x_\delta - x_0| < \delta\) but \(|f(x_\delta) - f(x_0)| \ge \varepsilon\).

Proof. Let \(\delta > 0\) and \(\varepsilon = 1/2\). By choosing \(x_\delta = x_0 - \delta /2\), we have

\[0 < |x_\delta-x_0| = \left|x_0 - \frac{\delta}{2} - x_0\right| = \frac{\delta}{2} < \delta,\]

and

\[|f(x_\delta) - f(x_0)| = |2 - 3| = 1 > \varepsilon.\]

Therefore by Theorem 5 \(f\) is not continuous at the point \(x_{0}\).

\(\square\)


Properties of continuous functions

This section contains some fundamental properties of continuous functions. We start with the Intermediate Value Theorem for continuous functions, also known as Bolzano's Theorem. This theorem states that a function that is continuous on a given closed real interval attains all values between its values at the endpoints of the interval. Intuitively, this follows from the fact that the graph of a continuous function defined on a real interval is an unbroken curve.

Theorem 6: Intermediate Value Theorem

If \(f\colon [a,b]\to \mathbb{R}\) is continuous and \(f(a) \lt s \lt f(b)\) or \(f(b) \lt s \lt f(a)\), then there is at least one \(c\in ]a,b[\) such that \(f(c)=s\).

The Intermediate Value Theorem.

Example 1.

Let \(f\colon\mathbb{R} \to \mathbb{R}\) be the function \[f(x) = x^5 - 3x - 1.\] Show that there is at least one \(c \in \mathbb{R}\) such that \(f(c) = 0\).

Solution. As a polynomial function, \(f\) is continuous. And because \[f(1) = 1^5 - 3 \cdot 1 - 1 = -3 < 0\] and \[f(-1) = (-1)^5 - 3 \cdot (-1) - 1 = 1 > 0,\] by the Intermediate Value Theorem there is at least one \(c \in ]-1, 1[\) such that \(f(c) = 0\).

Function \(f(x) = x^5 - 3x - 1\).
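The Intermediate Value Theorem only guarantees existence, but its idea leads directly to the bisection method for locating a zero. The Python sketch below is one standard way to write it (the helper name `bisection` is our own choice); it repeatedly halves \([-1,1]\), keeping a half on which \(f\) changes sign, so by the theorem a zero always remains inside.

```python
def f(x):
    return x**5 - 3 * x - 1

def bisection(f, a, b, tol=1e-12):
    """Locate a zero of a continuous f on [a, b] where f(a), f(b) have opposite signs.

    Each step keeps a half-interval whose endpoint values still differ in sign
    (or where f vanishes), so a zero remains inside by the Intermediate Value Theorem.
    """
    fa = f(a)
    if fa * f(b) > 0:
        raise ValueError("f(a) and f(b) must have opposite signs")
    while b - a > tol:
        m = (a + b) / 2
        fm = f(m)
        if fa * fm <= 0:
            b = m              # a sign change (or a zero) lies in [a, m]
        else:
            a, fa = m, fm      # a sign change lies in [m, b]
    return (a + b) / 2

c = bisection(f, -1.0, 1.0)
print(c, f(c))
```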

Example 2.

Let \(f(x)=x^3-x=x(x^2-1)=x(x-1)(x+1)\).

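Since \(f\) is continuous, the Intermediate Value Theorem shows that \(f\) cannot change sign between two consecutive zeros, because a sign change would produce another zero. Hence \(f(x)<0\) for \(x<-1\) or \(0 \lt x \lt 1\), and \(f(x)>0\) for \(-1 \lt x \lt 0\) or \(1 \lt x\), because: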

- \(f(x)=0\) if and only if \(x=0\) or \(x=\pm 1\), and

- \(f(-2)<0, f(-1/2)>0, f(1/2)<0\) and \(f(2)>0\).

Function \(f(x) = x^3 - x\).

Next we prove that a continuous function defined on a closed real interval is necessarily bounded. For this result, it is important that the interval is closed. A counterexample for an interval that is not closed is given after the next theorem.

Theorem 7.

Let \(f\colon [a,b]\to \mathbb{R}\) be continuous. Then \(f\) is bounded.

Note. If \(f\colon ]a,b[\to \mathbb{R}\) is continuous, it can be unbounded.

Example 4.

Let \(f\colon ]0,1]\to \mathbb{R}\), where \(f(x)=1/x\). Then \(f\) is continuous, but \[\lim_{x\to 0+}f(x)=\infty,\] so \(f\) is unbounded.

Theorem 8.

Let \(f\colon [a,b]\to \mathbb{R}\) be continuous. Then there exist points \(c,d\in [a,b]\) such that \(f(c)\leq f(x)\leq f(d)\) for all \(x\in [a,b]\), i.e. \(f(c)\) is minimum and \(f(d)\) is maximum of \(f\) on the interval \([a,b]\).

Function \(f(x) = 1/x\) for \(x > 0\).

Example 5.

Let \(f:[-1,2] \to \mathbb{R}\), where \[f(x) = -x^3 - x + 3.\] The domain of the function is \([-1,2]\). To determine the range of the function, we first notice that the function is decreasing. We will now show this.

Let \(x_1 < x_2\). Then \[x_{1}^3 < x_{2}^3\] and \[-x_{1}^3 > -x_{2}^3.\]

Since also \(-x_1 > -x_2\), adding the two inequalities gives \[-x_1^3-x_1 > -x_2^3 -x_2\] and hence \[-x_1^3-x_1 +3 > -x_2^3 -x_2 +3.\] Thus, if \(x_1 < x_2\), then \(f(x_1) > f(x_2)\), which means that the function \(f\) is decreasing.

A decreasing function attains its minimum value at the right endpoint of the interval. Thus, the minimum value of \(f:[-1,2] \to \mathbb{R}\) is \[f(2) = -2^3 - 2 + 3 = -7.\] Likewise, a decreasing function attains its maximum value at the left endpoint of the interval, so the maximum value of \(f:[-1,2] \to \mathbb{R}\) is \[f(-1) = -(-1)^3 - (-1) + 3 = 5.\]

As a polynomial function, \(f\) is continuous, so by the Intermediate Value Theorem it attains all values between its minimum and maximum values. Hence, the range of \(f\) is \([-7, 5]\).

Function \(-x^3 - x + 3\) for \([-1, 2]\).

Example 6.

Suppose that \(f\) is a polynomial. Then \(f\) is continuous on \(\mathbb{R}\) and, by Theorem 7, \(f\) is bounded on every closed interval \([a,b]\), \(a \lt b\). Furthermore, by Theorem 8, \(f\) attains its minimum and maximum values on \([a,b]\).

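Note. Theorem 8 is connected to the Intermediate Value Theorem in the following way:

If \(f\colon [a,b]\to \mathbb{R}\) is continuous, then there exist points \(x_1,x_2\in [a,b]\) such that \(f([a,b])=[f(x_1),f(x_2)]\). Indeed, by Theorem 8 \(f\) attains its minimum \(f(x_1)\) and its maximum \(f(x_2)\), and by the Intermediate Value Theorem it attains every value between them.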

4. Derivative

Derivative

The definition of the derivative of a function is given next. We start with an example illustrating the idea behind the formal definition.

Example 0.

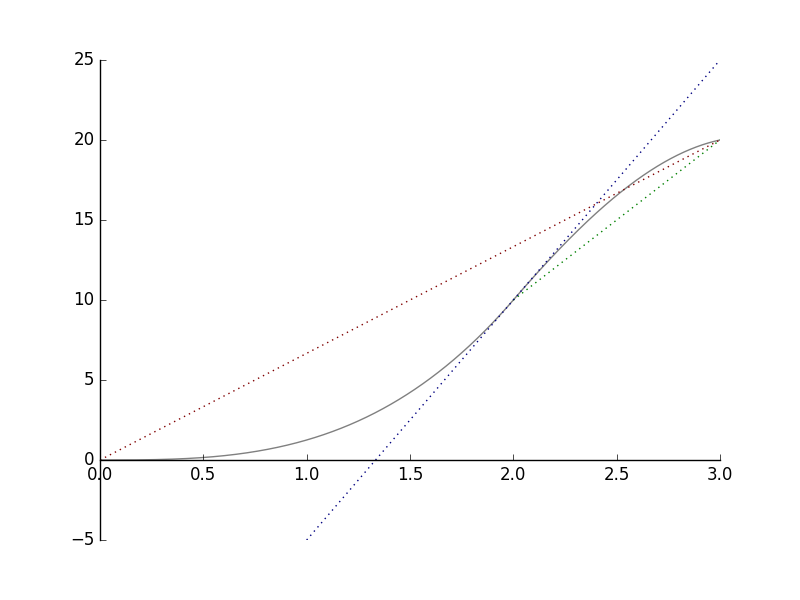

The graph below shows how far a cyclist gets from his starting point.

a) Look at the red line. We can see that in three hours, the cyclist moved \(20\) km. The average speed of the whole trip is \(20/3\approx 6.7\) km/h.

b) Now look at the green line. We can see that during the third hour the cyclist moved \(10\) km further. That makes the average speed over that time interval \(10\) km/h.

Notice that the slope of the red line is \(20/3 \approx 6.7\) and that the slope of the green line is \(10\). These are the same values as the corresponding average speeds.

c) Look at the blue line. It is the tangent of the curve at the time \(2\) h. Using the same principle as with average speeds, we conclude that two hours after departure, the speed of the cyclist was \(30/2\) km/h \(= 15\) km/h.

Now we will proceed to the general definition:

Definition: Derivative

Let \((a,b)\subset \mathbb{R}\) be an open interval. The derivative of a function \(f\colon (a,b)\to \mathbb{R}\) at the point \(x_0\in (a,b)\) is \[f'(x_0):=\lim_{h\to 0} \frac{f(x_0+h)-f(x_0)}{h}.\] If \(f'(x_0)\) exists, then \(f\) is said to be differentiable at the point \(x_0\).

Note: Since \(x = x_0+h\), then \(h=x-x_0\), and thus the definition can also be written in the form \[f'(x_0):=\lim_{x\to x_0} \frac{f(x)-f(x_0)}{x-x_0}.\]

The derivative can be denoted in different ways: \[ f'(x_0)=Df(x_0) =\left. \frac{df}{dx}\right|_{x=x_0}, \ \ f'=Df =\frac{df}{dx}. \]

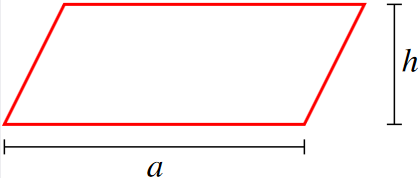

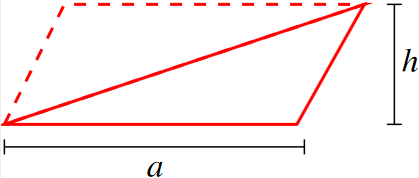

Interpretation. Consider the curve \(y = f(x)\). If we draw a line through the points \((x_0,f(x_0))\) and \((x_0+h, f(x_0+h))\), the slope of this line is \[\frac{f(x_0+h)-f(x_0)}{x_0+h-x_0} = \frac{f(x_0+h)-f(x_0)}{h}.\] As \(h \to 0\), the point \((x_0+h, f(x_0+h))\) moves along the curve towards \((x_0, f(x_0))\), and these secant lines approach a limiting line through \((x_0,f(x_0))\). This limiting line is the tangent of the curve \(y=f(x)\) at the point \((x_0,f(x_0))\), and its slope is \[\lim_{h\to 0} \frac{f(x_0+h)-f(x_0)}{h},\] which is the derivative of the function \(f\) at \( x_0\). Hence, the tangent is given by the equation \[y=f(x_0)+f'(x_0)(x-x_0).\]


Example 1.

Let \(f\colon \mathbb{R} \to \mathbb{R}\) be the function \(f(x) = x^3 + 1\). The derivative of \(f\) at \(x_0 = 1\) is \[\begin{aligned}f'(1) &=\lim_{h \to 0} \frac{f(1+h)-f(1)}{h} \\ &=\lim_{h \to 0} \frac{(1+h)^3 + 1 - 1^3 - 1}{h} \\ &=\lim_{h \to 0} \frac{1+3h+3h^2+h^3-1}{h} \\ &=\lim_{h \to 0} \frac{h(3+3h+h^2)}{h} \\ &=\lim_{h \to 0} 3+3h+h^2 \\ &= 3. \end{aligned}\]

Function \( x^3 + 1\) and its tangent at the point \(1\).
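The limit in Example 1 can be watched numerically: the Python sketch below computes the difference quotient \((f(1+h)-f(1))/h\) for a few small values of \(h\), including a negative one, and compares it with the simplified form \(3+3h+h^2\) from the computation above.

```python
def f(x):
    return x**3 + 1

x0 = 1.0
for h in (0.1, 0.01, 0.001, -0.001):
    q = (f(x0 + h) - f(x0)) / h     # slope of the secant line
    print(h, q, 3 + 3 * h + h**2)   # agrees with 3 + 3h + h^2, which tends to 3
```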

Example 2.

Let \(f\colon \mathbb{R} \to \mathbb{R}\) be the function \(f(x)=ax+b\). We find the derivative of \(f(x)\).

Immediately from the definition we get: \[\begin{aligned}f'(x) &=\lim_{h\to 0} \frac{f(x+h)-f(x)}{h} \\ &=\lim_{h\to 0} \frac{[a(x+h)+b]-[ax+b]}{h} \\ &=\lim_{h\to 0} \frac{ah}{h} \\ &=\lim_{h\to 0} a \\ &=a.\end{aligned}\]

Here \(a\) is the slope of the tangent line. Note that the derivative at \(x\) does not depend on \(x\) because \(y=ax+b\) is the equation of a line.

Note. When \(a=0\), we get \(f(x) = b\) and \(f'(x) = 0\). The derivative of a constant function is zero.

Example 3.

Let \(g\colon \mathbb{R} \to \mathbb{R}\) be the function \(g(x)=|x|\). Does \(g\) have a derivative at \(0\)?

Now \[g'(x_0)= \begin{cases}+1 & \text{when $x_{0}>0$} \\ -1 & \text{when $x_{0}<0$}\end{cases}\]

The graph \(y=g(x)\) has no tangent at the point \(x_0=0\): \[\frac{g(0+h)-g(0)}{h}= \frac{|0+h|-|0|}{h}=\frac{|h|}{h}=\begin{cases}+1 & \text{for $h>0$}, \\ -1 & \text{for $h<0$}.\end{cases}\] Thus \(g'(0)\) does not exist.

Conclusion. The function \(g\) is not differentiable at the point \(0\).

Remark. Let \(f\colon (a,b)\to \mathbb{R}\). If \(f'(x)\) exists for every \(x\in (a,b)\) then we get a function \(f'\colon (a,b)\to \mathbb{R}\). We write:

\[\begin{aligned} f(x) &= f^{(0)}(x), \\ f'(x) &= f^{(1)}(x) = \frac{d}{dx}f(x), \\ f''(x) &= f^{(2)}(x) = \frac{d^2}{dx^2}f(x), \\ f'''(x) &= f^{(3)}(x) = \frac{d^3}{dx^3}f(x), \\ &\ \,\vdots \end{aligned}\]

Here \(f''(x)\) is called the second derivative of \(f\) at \(x\), \(f^{(3)}\) is the third derivative, and so on.

We introduce the notation \begin{eqnarray} C^n\bigl( ]a,b[\bigr) =\{ f\colon \, ]a,b[\, \to \mathbb{R} & \mid & f \text{ is } n \text{ times differentiable on the interval } ]a,b[ \nonumber \\ & & \text{ and } f^{(n)} \text{ is continuous}\}. \nonumber \end{eqnarray} These functions are said to be \(n\) times continuously differentiable.

Function \(|x|\).

Example 4.

The distance moved by a cyclist (or a car) is given by \(s(t)\). Then the speed at the moment \(t\) is \(s'(t)\) and the acceleration is \(s''(t)\).

Linearization and differential

Properties of derivative

Next we give some useful properties of the derivative. These properties allow us to find derivatives for some familiar classes of functions such as polynomials and rational functions.

Continuity and derivative

If \(f\) is differentiable at the point \(x_0\), then \(f\) is continuous at the point \(x_0\): \[ \lim_{h\to 0} f(x_0+h) = f(x_0).\] Why? For \(h\neq 0\) we can write \[f(x_0+h)=f(x_0)+h\frac{f(x_0+h)-f(x_0)}{h},\] and if \(f\) is differentiable at \(x_0\), the right-hand side tends to \(f(x_0)+0\cdot f'(x_0)=f(x_0)\) as \(h \to 0\).

Note. If a function is continuous at the point \(x_0\), it doesn't have to be differentiable at that point. For example, the function \(g(x) = |x|\) is continuous, but not differentiable at the point \(0\).

Differentiation Rules

Next we give some important rules that are often used in practice to determine the derivative of a given function.

Suppose that \(f\) and \(g\) are differentiable at \(x\).

A Constant Multiplier

\[(cf)'(x) = cf'(x),\ c \in \mathbb{R}\]

Suppose that \(f\) is differentiable at \(x\). We determine: \[(cf)'(x),\] where \(c\in \mathbb{R}\) is a constant.

\[\begin{aligned}\frac{(cf)(x+h)-(cf)(x)}{h} \ & \ = \ \frac{cf(x+h)-cf(x)}{h} \\ & \ = \ c \ \frac{f(x+h)-f(x)}{h}\end{aligned}\]

As \(h\to 0\), we get \[c \ \frac{f(x+h)-f(x)}{h} \to c f'(x).\]

\(\square\)

The Sum Rule

\[(f+g)'(x) = f'(x) + g'(x)\]

Suppose that \(f\) and \(g\) are differentiable at \(x\). We determine \[(f+g)'(x).\]

By the definition: \[\begin{aligned}\frac{(f+g)(x+h)-(f+g)(x)}{h} \ & \ = \ \frac{[f(x+h)+g(x+h)]-[f(x)+g(x)]}{h} \\ & \ = \ \frac{f(x+h)-f(x)}{h}+\frac{g(x+h)-g(x)}{h}\end{aligned}\]

When \(h\to 0\), we get \[\frac{f(x+h)-f(x)}{h}+\frac{g(x+h)-g(x)}{h}\to \ f'(x)+g'(x)\]

\(\square\)

The Product Rule

\[(fg)'(x) = f'(x)g(x) + f(x)g'(x)\]

Suppose that \(f\) and \(g\) are differentiable at \(x\). We determine \[(fg)'(x).\] \[\begin{aligned}\frac{(fg)(x+h)-(fg)(x)}{h} & = \frac{f(x+h)g(x+h)-f(x)g(x)}{h} \\ & = \frac{f(x+h)g(x+h)-f(x)g(x+h)+f(x)g(x+h)-f(x)g(x)}{h} \\ & = \frac{f(x+h)-f(x)}{h}\ g(x+h)+f(x)\ \frac{g(x+h)-g(x)}{h}\end{aligned}\]

When \(h\to 0\), we have \(g(x+h)\to g(x)\), because \(g\) is differentiable and hence continuous at \(x\). Therefore \[\frac{f(x+h)-f(x)}{h}g(x+h)+f(x)\frac{g(x+h)-g(x)}{h}\to f'(x)g(x)+f(x)g'(x).\]

\(\square\)

The Power Rule

\[\frac{d}{dx} x^n = nx^{n-1} \text{, } n \in \mathbb{Z}\]

For \( n\ge 1\) we repeatedly apply the product rule to obtain \[\begin{aligned}\frac{d}{dx}x^n \ & = \frac{d}{dx}(x\cdot x^{n-1}) \\ & = (\frac{d}{dx}x)x^{n-1}+x\frac{d}{dx}x^{n-1} \\ & \stackrel{dx/dx=1}{=} x^{n-1}+x\frac{d}{dx}x^{n-1} \\ & = x^{n-1}+x\left( x^{n-2}+x\frac{d}{dx}x^{n-2}\right) \\ & = \ldots \\ & = \sum_{k=0}^{n-1} x^{n-1} \\ & = nx^{n-1}.\end{aligned}\]

The case of negative \( n\) is obtained from this and the product rule applied to the identity \( x^n \cdot x^{-n} = 1\).

From the power rule we obtain a formula for the derivative of a polynomial. Let \[P(x)=a_n x^{n}+a_{n-1}x^{n-1}+\ldots+ a_1 x + a_0,\] where \(n\in \mathbb{N}\). Then \[\frac{d}{dx}P(x)=na_nx^{n-1}+(n-1)a_{n-1}x^{n-2}+\ldots +2 a_2 x+a_1.\]

\(\square\)
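The polynomial formula above translates directly into a computation on coefficient lists. In this Python sketch (the function names are hypothetical), a polynomial is stored as the list \([a_0, a_1, \ldots, a_n]\) of its coefficients, and each term \(a_i x^i\) is replaced by \(i a_i x^{i-1}\). The test polynomial is the expanded product \((x^4-2)(2x+1)=2x^5+x^4-4x-2\), which also appears in Example 2 below.

```python
def poly_derivative(coeffs):
    """Differentiate P(x) = a_0 + a_1 x + ... + a_n x^n.

    coeffs[i] holds a_i; the result holds the coefficients of P'(x),
    using d/dx a_i x^i = i a_i x^(i-1) together with the sum rule.
    """
    return [i * a for i, a in enumerate(coeffs)][1:]

def poly_eval(coeffs, x):
    """Evaluate the polynomial with Horner's scheme."""
    result = 0
    for a in reversed(coeffs):
        result = result * x + a
    return result

# P(x) = 2x^5 + x^4 - 4x - 2, stored from the constant term upwards.
P = [-2, -4, 0, 0, 1, 2]
dP = poly_derivative(P)
print(dP)  # [-4, 0, 0, 4, 10], i.e. 10x^4 + 4x^3 - 4
```

A constant polynomial such as `[5]` yields the empty list, which `poly_eval` treats as the zero polynomial.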

The Reciprocal Rule

\[\Big(\frac{1}{f}\Big)'(x) = - \frac{f'(x)}{f(x)^2} \text{, } f(x) \neq 0\]

Suppose that \(f\) is differentiable at \(x\) and \(f(x)\neq 0\). We determine \[(\frac{1}{f})'(x).\]

From the definition we obtain: \[\begin{aligned}\frac{(1/f)(x+h)-(1/f)(x)}{h} & = \frac{1/f(x+h)-1/f(x)}{h} \\ & = \frac{\frac{f(x)}{f(x)f(x+h)}-\frac{f(x+h)}{f(x)f(x+h)}}{h} \\ & = \frac{f(x)-f(x+h)}{h}\frac{1}{f(x)f(x+h)}\end{aligned}\]

Because \(f\) is differentiable, and hence continuous, at \(x\), we have \(f(x+h)\to f(x)\), and therefore \[\frac{f(x)-f(x+h)}{h}\frac{1}{f(x)f(x+h)}\to -\frac{f'(x)}{f(x)^2},\] as \(h\to 0\).

\(\square\)

The Quotient Rule

\[(f/g)'(x) = \frac{f'(x)g(x)-f(x)g'(x)}{g(x)^2},\ g(x) \neq 0\]

Suppose that \(f,g\) are differentiable at \(x\) and \(g(x)\neq 0\). Then \[\begin{aligned}(f/g)'(x) & = \Big( f \cdot \frac{1}{g}\Big) '(x) \\ & = f'(x)\frac{1}{g(x)}-f(x)\frac{g'(x)}{g(x)^2} \\ & = \frac{f'(x)g(x)-f(x)g'(x)}{g(x)^2}.\end{aligned}\]

\(\square\)


Example 1.

\[\frac{d}{dx}(x^{2006}+5x^3+42)=\frac{d}{dx}x^{2006}+5\frac{d}{dx}x^3+42\frac{d}{dx}1=2006x^{2005}+5\cdot 3x^2.\]

Example 2.

\[\begin{aligned}\frac{d}{dx} [(x^4-2)(2x+1)] &= \frac{d}{dx}(x^4-2) \cdot (2x+1) + (x^4-2) \cdot \frac{d}{dx}(2x + 1) \\ &= 4x^3(2x+1) + 2(x^4-2) \\ &= 8x^4+4x^3+2x^4-4 \\ &= 10x^4+4x^3-4.\end{aligned}\]

Note. We can check the answer by expanding the product first and then differentiating: \[\frac{d}{dx} [(x^4-2)(2x+1)] = \frac{d}{dx} (2x^5 +x^4 -4x -2) = 10x^4 +4x^3 -4.\]

Function \( (x^4-2)(2x+1) \).

Example 3.

For \(x \neq 0\) we get \[\frac{d}{dx} \frac{3}{x^3} = 3 \cdot \frac{d}{dx} \frac{1}{x^3} = -3 \cdot \frac{\frac{d}{dx} x^3}{(x^3)^2} = -3 \cdot \frac{3x^2}{x^6}= - \frac{9}{x^4}.\]

Note. There is another way of solving the problem above by noticing that \(\frac{1}{x^3} = x^{-3}\) and differentiating it as a power: \[\frac{d}{dx} \ \frac{3}{x^3} = 3 \cdot \frac{d}{dx} x^{-3} = 3 \cdot (-3x^{-4})= - \frac{9}{x^4}\]

Example 4.

\[\begin{aligned}\frac{d}{dx} \frac{x^3}{1+x^2} & = \frac{(\frac{d}{dx}x^3)(1+x^2)-x^3\frac{d}{dx}(1+x^2)}{(1+x^2)^2} \\ & = \frac{3x^2(1+x^2)-x^3(2x)}{(1+x^2)^2} \\ & = \frac{3x^2+x^4}{(1+x^2)^2}.\end{aligned}\]

Function \(x^3 / (1+x^2)\).
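Results such as Example 4 can be sanity-checked with the symmetric difference quotient \((f(x+h)-f(x-h))/(2h)\), a standard numerical approximation of \(f'(x)\) that is not part of this text. The Python sketch below compares it with the quotient-rule result at a few points; agreement to several decimals supports the formula but does not prove it.

```python
def central_difference(f, x, h=1e-5):
    """Approximate f'(x) by the symmetric quotient (f(x+h) - f(x-h)) / (2h)."""
    return (f(x + h) - f(x - h)) / (2 * h)

def f(x):
    return x**3 / (1 + x**2)

def f_prime(x):
    # Result of Example 4, obtained with the quotient rule.
    return (3 * x**2 + x**4) / (1 + x**2) ** 2

for x in (-2.0, -0.5, 0.0, 1.0, 3.0):
    print(x, central_difference(f, x), f_prime(x))
```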

Rolle's Theorem

If \(f\) is differentiable at a local extremum \(x_0\in \, ]a,b[\), then \(f'(x_0)=0\).

The one-sided limits of the difference quotient have opposite signs at a local extremum. For example, at a local maximum it holds that \begin{eqnarray} \frac{f(x_0+h)-f(x_0)}{h} = \frac{\text{non-positive} }{\text{positive}}&\le& 0, \text{ when } h>0, \nonumber \\ \frac{f(x_0+h)-f(x_0)}{h} = \frac{\text{non-positive}}{\text{negative}}&\ge& 0, \text{ when } h<0, \nonumber \end{eqnarray} provided that \(|h|\) is so small that \(f(x_0)\) is a maximum on the interval \([x_0-|h|,x_0+|h|]\). Both one-sided limits equal \(f'(x_0)\), so \(f'(x_0)\le 0\) and \(f'(x_0)\ge 0\), that is, \(f'(x_0)=0\).

L'Hospital's Rule

There are many different versions of this rule, but we present only the simplest one. Let us assume that \(f(x_0)=g(x_0)=0\) and the functions \(f,g\) are differentiable on some interval \(]x_0-\delta,x_0+\delta[\). If \[ \lim_{x\to x_0}\frac{f'(x)}{g'(x)} \] exists, then \[ \lim_{x\to x_0}\frac{f(x)}{g(x)}=\lim_{x\to x_0}\frac{f'(x)}{g'(x)}. \]

In the special case \(g'(x_0)\neq 0\) the proof is simple: \[ \frac{f(x)}{g(x)}=\frac{f(x)-f(x_0)}{g(x)-g(x_0)} = \frac{\bigl( f(x)-f(x_0)\bigr) /(x-x_0)}{\bigl( g(x)-g(x_0)\bigr) /(x-x_0)} \to \frac{f'(x_0)}{g'(x_0)}. \] In the general case we need the so-called generalized mean value theorem, which states that \[ \frac{f(x)}{g(x)} = \frac{f'(c)}{g'(c)} \] for some \(c\in \, ]x_0,x[\). Here we have the same point \(c\) both in the numerator and the denominator, so we do not even need the continuity of the derivatives!
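The simple case \(g'(x_0)\neq 0\) can be illustrated numerically. The functions in this Python sketch, \(f(x)=x^3-1\) and \(g(x)=x^2-1\) at \(x_0=1\), are a hypothetical example chosen for illustration: \(f(1)=g(1)=0\), and the rule predicts the limit \(f'(1)/g'(1)=3/2\).

```python
def f(x):
    return x**3 - 1      # hypothetical numerator, f(1) = 0

def g(x):
    return x**2 - 1      # hypothetical denominator, g(1) = 0

def df(x):
    return 3 * x**2      # f'(x)

def dg(x):
    return 2 * x         # g'(x)

x0 = 1.0
print(df(x0) / dg(x0))                      # f'(1)/g'(1) = 3/2
for h in (1e-1, 1e-3, 1e-5, -1e-5):
    print(x0 + h, f(x0 + h) / g(x0 + h))    # f/g approaches 3/2 near x0
```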

Derivatives of Trigonometric Functions

In this section, we give differentiation formulas for trigonometric functions \(\sin\), \(\cos\) and \(\tan\).

Derivative of Sine

\[\sin'(t)=\cos(t)\]

Function \(\sin(x)\) and its derivative function \(\cos(x)\).

Derivative of Cosine

\[\cos'(t)= - \sin(t)\]

This can be proved in a similar way as the derivative of sine, or more easily from the identity \(\cos(t)=\sin(\pi/2-t)\) and the Chain Rule introduced in the following section.

\(\square\)

Function \(\cos(x)\) and its derivative function \(-\sin(x)\).

Derivative of Tangent

\[\tan'(t) = \frac{1}{\cos^2(t)}=1+\tan^2 t.\]

Because \[\tan(t)=\frac{\sin(t)}{\cos(t)},\] from the quotient rule we obtain \[\tan'(t)=\frac{\sin'(t)\cos(t)-\sin(t)\cos'(t)}{\cos^2(t)}=\frac{\cos^2(t)+\sin^2(t)}{\cos^2(t)}=\begin{cases}\frac{1}{\cos^2(t)} & \\ 1+\tan^2 t.\end{cases}\]

\(\square\)

Function \(\tan(x)\) and its derivative function \(1/\cos^2(x)\).

Example 1.

\[\frac{d}{dx} (3 \sin(x)) = 3 \sin'(x) = 3 \cos(x).\]

Example 2.

\[\frac{d}{dx} \cos^2 (x) = \cos'(x) \cdot \cos(x) + \cos(x) \cdot \cos'(x) = -2\sin(x)\cos(x).\]

Example 3.

\[\begin{aligned} \frac{d}{dx} \frac{\sin(x) + 1}{\cos(x)} &= \frac{d}{dx} \left( \frac{\sin(x)}{\cos(x)} + \frac{1}{\cos(x)} \right) \\ &= \tan'(x) - \frac{\cos'(x)}{\cos^2(x)} \\ &= \frac{1+\sin(x)}{\cos^2 (x)}.\end{aligned}\]

The Chain Rule

In this section we learn a formula for finding the derivative of a composite function. This important formula is known as the Chain Rule.

The Chain Rule.

Let \(f\colon \mathbb{R}\to \mathbb{R}\), \(g\colon \mathbb{R}\to \mathbb{R}\) and \(f \circ g \colon \mathbb{R}\to \mathbb{R}\).

Let \(g\) be differentiable at the point \(x\) and \(f\) at \(g(x)\). Then

\[\frac{d}{dx}f(g(x))=f'(g(x))g'(x).\]

Consider

\[\begin{aligned}\frac{f(g(x+h))-f(g(x))}{h} &= \frac{f(g(x+h))-f(g(x))}{h} \ \frac{g(x+h)-g(x)}{g(x+h)-g(x)} \\ &= \frac{f(g(x+h))-f(g(x))}{g(x+h)-g(x)} \ \frac{g(x+h)-g(x)}{h}.\end{aligned}\]

Now let us write \(k(h):=g(x+h)-g(x)\). Then \(g(x+h)=g(x)+k(h)\) and we get \[\frac{f(g(x+h))-f(g(x))}{h}=\frac{f(g(x)+k(h))-f(g(x))}{k(h)}\frac{g(x+h)-g(x)}{h}.\]

Problem. What if \(k(h)=0\)? Note that one cannot divide by zero.

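Solution. Define \[E(k):= \begin{cases}0, & \text{for $k=0$}, \\ \frac{f(g(x)+k)-f(g(x))}{k}-f'(g(x)), & \text{for $k\neq 0$},\end{cases}\] so that \[\frac{f(g(x+h))-f(g(x))}{h}=[E(k(h))+f'(g(x))]\frac{g(x+h)-g(x)}{h}\] holds for all small \(h\neq 0\), also when \(k(h)=0\). The function \(E\) is continuous at \(0\) because \(f\) is differentiable at \(g(x)\), and \(k(h)\to 0\) as \(h\to 0\) because \(g\) is continuous at \(x\). Hence \(E(k(h))\to 0\), and we get \[[E(k(h))+f'(g(x))]\frac{g(x+h)-g(x)}{h}\to f'(g(x))g'(x)\] as \(h\to 0\).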

\(\square\)

Example 1.

The problem is to differentiate the function \((2x-1)^3\). We take \(f(x) = x^3\) and \(g(x) = 2x-1\) and differentiate the composite function \(f(g(x))\). As \[f'(x) = 3x^2 \text{ and } g'(x) = 2,\] we get \[\frac{d}{dx} (2x-1)^3 = 3(2x-1)^2 \cdot 2 = 6(4x^2-4x+1) = 24x^2-24x+6.\]

Function \((2x-1)^3\) and its derivative function.

Example 2.

We need to differentiate the function \(\sin 3x\). Take \(f(x) = \sin x\) and \(g(x) = 3x\), then differentiate the composite function \(f(g(x))\). \[\frac{d}{dx} \sin 3x = \cos 3x \cdot 3 = 3 \cos 3x.\]

Function \(\sin 3x\) and its derivative function.

Remark. Let \(h\colon \mathbb{R}\to \mathbb{R}\), \(g\colon \mathbb{R}\to \mathbb{R}\) and \(f\colon \mathbb{R}\to \mathbb{R}\) be differentiable. Applying the Chain Rule twice gives \[\frac{d}{dx}f(g(h(x)))=f'(g(h(x)))\frac{d}{dx}g(h(x))=f'(g(h(x)))g'(h(x))h'(x).\] In the same way, the rule extends to composites of any number of functions.

Example 3.

Differentiate the function \(\cos^3 2x\). Take \(f(x) = x^3\), \(g(x) = \cos x\) and \(h(x) = 2x\) and differentiate the composite function \(f(g(h(x)))\). \[\begin{aligned}\frac{d}{dx} \cos^3 2x &= 3(\cos 2x)^2 \cdot \frac{d}{dx} \cos 2x \\ &= 3 \cos^2 2x \cdot (-\sin 2x) \cdot 2 \\ &= -6 \sin 2x \cos^2 2x.\end{aligned}\]

Function \(\cos^3 2x\) and its derivative function.
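One concrete way to see the Chain Rule at work is forward-mode automatic differentiation with dual numbers. Each value carries its derivative along, and every elementary function multiplies the incoming derivative by its own derivative, \(f'(u)\,u'\). The following is a minimal sketch. The class `Dual` and the helpers `lift`, `sin`, `cos` and `derivative` are our own illustrative names, not a library API. It supports only the operations needed for Examples 1 to 3.

```python
import math
from dataclasses import dataclass


@dataclass
class Dual:
    """A pair (value, derivative) propagated through a computation."""
    val: float
    der: float

    def __add__(self, other):
        other = lift(other)
        return Dual(self.val + other.val, self.der + other.der)

    __radd__ = __add__

    def __sub__(self, other):
        other = lift(other)
        return Dual(self.val - other.val, self.der - other.der)

    def __mul__(self, other):
        other = lift(other)
        # Product rule: (uv)' = u'v + uv'
        return Dual(self.val * other.val,
                    self.der * other.val + self.val * other.der)

    __rmul__ = __mul__

    def __pow__(self, n):
        # Outer function x**n, inner function u: (u**n)' = n*u**(n-1) * u'
        return Dual(self.val ** n, n * self.val ** (n - 1) * self.der)


def lift(c):
    """Treat a plain number as a constant (derivative 0)."""
    return c if isinstance(c, Dual) else Dual(float(c), 0.0)


def sin(u):
    # Chain rule: (sin u)' = cos(u) * u'
    return Dual(math.sin(u.val), math.cos(u.val) * u.der)


def cos(u):
    # Chain rule: (cos u)' = -sin(u) * u'
    return Dual(math.cos(u.val), -math.sin(u.val) * u.der)


def derivative(func, x):
    """Evaluate func at x with the seed derivative dx/dx = 1."""
    return func(Dual(x, 1.0)).der


x0 = 0.4
print(derivative(lambda x: (2 * x - 1) ** 3, x0), 24 * x0**2 - 24 * x0 + 6)
print(derivative(lambda x: sin(3 * x), x0), 3 * math.cos(3 * x0))
print(derivative(lambda x: cos(2 * x) ** 3, x0),
      -6 * math.sin(2 * x0) * math.cos(2 * x0) ** 2)
```

In each printed pair, the first number comes from propagating derivatives and the second from the formula derived by hand. They agree up to rounding.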

Extremal Value Problems

We will discuss the Intermediate Value Theorem for differentiable functions, and its connections to extremal value problems.

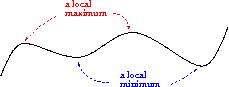

Definition: Local Maxima and Minima

A function \(f\colon A\to \mathbb{R}\) has a local maximum at the point \(x_0\in A\) if for some \(h\gt 0\) and for all \(x\in A\) such that \(|x-x_0|\lt h\), we have \(f(x)\leq f(x_0)\).

Similarly, a function \(f\colon A\to \mathbb{R}\) has a local minimum at the point \(x_0\in A\) if for some \(h>0\) and for all \(x\in A\) such that \(|x-x_0|\lt h\), we have \(f(x)\geq f(x_0)\).

A local extremum is a local maximum or a local minimum.

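Remark. If \(f\) has a local maximum at an interior point \(x_0\) of \(A\) and \(f'(x_0)\) exists, then \(f(x_0+h)-f(x_0)\leq 0\) for all sufficiently small \(|h|\), and therefore \[\begin{cases}f'(x_0) & =\lim_{h\to 0^{+}}\frac{f(x_0+h)-f(x_0)}{h} \leq 0, \\ f'(x_0) & =\lim_{h\to 0^{-}}\frac{f(x_0+h)-f(x_0)}{h} \geq 0.\end{cases}\] Hence \(f'(x_0)=0\). The same argument applies to a local minimum.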

We get:

Theorem 1.

Let a continuous function \(f\colon [a,b]\to \mathbb{R}\) have a local extremum at the point \(x_0\in [a,b]\). Then either

the derivative \(f'(x_0)\) does not exist (this includes the endpoints \(x_0=a\) and \(x_0=b\), where the two-sided derivative is not defined), or

\(f'(x_0)=0\).

Example 1.

Let \(f: \mathbb{R} \to \mathbb{R}\) be defined by \[f(x) = x^3 -3x + 1.\] Then \[f'(x) = 3x^2-3,\] which vanishes at the points \(x_0 = -1\) and \(x_0 = 1\): \[f'(-1) = 3 \cdot (-1)^2 - 3 = 0 \text{ and } f'(1) = 3 \cdot 1^2 - 3 = 0.\] By Theorem 1 these are the only candidates for local extrema, and the graph shows that \(f\) has a local maximum at \(x_0=-1\) and a local minimum at \(x_0=1\).

Function \(x^3-3x+1\) and its derivative function \(3x^2-3\).
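Theorem 1 only says that \(f'(x_0)=0\) at an interior extremum. It does not tell a maximum from a minimum. One simple check, in the spirit of the sign tables used later in this section, is to look at the sign of \(f'\) just to the left and to the right of each zero. This is a minimal sketch. It assumes a small step `delta = 1e-3` (our choice) and that \(f'\) has no other zeros within that distance. The helper name `classify` is hypothetical.

```python
def f_prime(x):
    return 3 * x**2 - 3  # derivative of f(x) = x^3 - 3x + 1


def classify(df, x0, delta=1e-3):
    """Classify a zero x0 of df by the sign of df on both sides of it."""
    left, right = df(x0 - delta), df(x0 + delta)
    if left > 0 > right:
        return "local maximum"  # f increases, then decreases
    if left < 0 < right:
        return "local minimum"  # f decreases, then increases
    return "no sign change"


for x0 in (-1.0, 1.0):
    print(x0, f_prime(x0), classify(f_prime, x0))
```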

Finding the global extrema

In practice, when we are looking for the local extrema of a given function, we need to check three kinds of points:

the zeros of the derivative

the endpoints of the domain of definition (interval)

points where the function is not differentiable

If we know beforehand that the function attains a minimum/maximum (for example, a continuous function on a closed and bounded interval does), we first find all candidate points described above. We then evaluate the function at these points and pick the smallest/greatest of these values.

Example 2.

Let us find the smallest and greatest value of the function \(f\colon [0,2]\to \mathbb{R}\), \(f(x)=x^3-6x\). Since the function is continuous on a closed interval, it attains a maximum and a minimum. Since the function is also differentiable, it is sufficient to examine the endpoints of the interval and the zeros of the derivative that lie in the interval.

The zeros of the derivative are given by \(f'(x)=3x^2-6=0 \Leftrightarrow x=\pm \sqrt{2}\). Since \(-\sqrt{2}\not\in [0,2]\), we only need to evaluate the function at three points: \(f(0)=0\), \(f(\sqrt{2})=2\sqrt{2}-6\sqrt{2}=-4\sqrt{2}\) and \(f(2)=-4\). Hence the smallest value of the function is \(-4\sqrt{2}\), attained at \(x=\sqrt{2}\), and the greatest value is \(0\), attained at \(x=0\).
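Once the candidate points are known, the recipe is mechanical: evaluate \(f\) at each candidate and take the extreme values. This is a minimal sketch for this example. The list of candidates is entered by hand from the computation above, and the variable names are our own.

```python
import math


def f(x):
    return x**3 - 6 * x


# Candidates on [0, 2]: the endpoints and sqrt(2), the only zero of
# f'(x) = 3x^2 - 6 inside the interval (-sqrt(2) lies outside it).
candidates = [0.0, math.sqrt(2), 2.0]
values = {x: f(x) for x in candidates}

x_min = min(values, key=values.get)
x_max = max(values, key=values.get)
print("smallest value", values[x_min], "at", x_min)
print("greatest value", values[x_max], "at", x_max)
```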

Next we formulate a fundamental result for differentiable functions. The basic idea is that a function can change over an interval only if it changes at some point of the interval. In other words, the average rate of change over the interval equals the derivative at some interior point.

Theorem 2.

(The Intermediate Value Theorem for Differentiable Functions, also known as the Mean Value Theorem.) Let \(f\colon [a,b]\to \mathbb{R}\) be continuous in the interval \([a,b]\) and differentiable in the interval \((a,b)\). Then \[f'(x_0)=\frac{f(b)-f(a)}{b-a}\] for some \(x_0\in (a,b).\)

Let \(f\) be continuous in the interval \([a,b]\) and differentiable in the interval \((a,b)\). Let us define \[g(x):=f(x)-\frac{f(b)-f(a)}{b-a}(x-a)-f(a).\]

Now \(g(a)=g(b)=0\), and \(g\) is continuous in the interval \([a,b]\) and differentiable in the interval \((a,b)\). According to Rolle's Theorem, there exists \(c\in(a,b)\) such that \(g'(c)=0\). Hence \[f'(c)=g'(c)+\frac{f(b)-f(a)}{b-a}=\frac{f(b)-f(a)}{b-a},\] so \(x_0=c\) has the required property.

\(\square\)
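Theorem 2 guarantees that such a point \(x_0\) exists, but it does not say how to find it. When \(f'(x)-\frac{f(b)-f(a)}{b-a}\) changes sign between \(a\) and \(b\), bisection locates one such point numerically. In the sketch below, the function \(f(x)=x^3\) on \([0,2]\) is our own illustrative choice. There the slope is \(4\), so \(3x_0^2=4\) and the exact point is \(x_0=2/\sqrt{3}\). The helper name `mean_value_point` is hypothetical.

```python
def mean_value_point(f, df, a, b, tol=1e-12):
    """Return x0 in (a, b) with df(x0) = (f(b) - f(a)) / (b - a), by bisection.

    Assumes df(x) - slope has opposite signs at a and b. Theorem 2 itself
    does not guarantee this, so the function raises an error otherwise.
    """
    slope = (f(b) - f(a)) / (b - a)

    def phi(x):
        return df(x) - slope

    lo, hi = a, b
    if phi(lo) * phi(hi) > 0:
        raise ValueError("f' - slope does not change sign on [a, b]")
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if phi(lo) * phi(mid) <= 0:
            hi = mid  # a sign change (or a zero) lies in [lo, mid]
        else:
            lo = mid  # otherwise it lies in [mid, hi]
    return (lo + hi) / 2


# Illustrative example: f(x) = x^3 on [0, 2], where the slope is 4.
x0 = mean_value_point(lambda x: x**3, lambda x: 3 * x**2, 0.0, 2.0)
print(x0, 2 / 3**0.5)  # both approximately 1.1547
```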

This result has an important application:

Theorem 3.

Let \(f\colon (a,b)\to \mathbb{R}\) be a differentiable function. Then

If \(f'(x)\geq 0\) for all \(x\in (a,b)\), then \(f\) is increasing.

If \(f'(x)\leq 0\) for all \(x\in (a,b)\), then \(f\) is decreasing.

Suppose that \(a \lt x_1 \lt x_2 \lt b\).

Then by Theorem 2 there exists \(x_0\in (x_1,x_2)\) such that \[f'(x_0)=\frac{f(x_2)-f(x_1)}{x_2-x_1}.\]

It follows that \(f(x_2)-f(x_1)=f'(x_0)(x_2-x_1)\).

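Since \(x_2-x_1>0\), we get \(f(x_2)\geq f(x_1)\) if \(f'(x_0)\geq 0\), and \(f(x_2)\leq f(x_1)\) if \(f'(x_0)\leq 0\). As \(x_1\lt x_2\) were arbitrary points of \((a,b)\), \(f\) is increasing if \(f'\geq 0\) on \((a,b)\), and decreasing if \(f'\leq 0\) on \((a,b)\).

\(\square\)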

Example 3.

For the polynomial \(f(x) = \frac{1}{4} x^4-2x^2-7\) the derivative is \[f'(x) = x^3-4x = x(x^2-4),\] which is zero when \(x=0\), \(x=2\) or \(x=-2\). Since \(f'\) is continuous, it can change sign only at these points, so we can draw a table of signs:

| | \(x<-2\) | \(-2 \lt x \lt 0\) | \(0 \lt x \lt 2\) | \(x>2\) |
|---|---|---|---|---|
| \(x\) | \(<0\) | \(<0\) | \(>0\) | \(>0\) |
| \(x^2-4\) | \(>0\) | \(<0\) | \(<0\) | \(>0\) |
| \(f'(x)\) | \(<0\) | \(>0\) | \(<0\) | \(>0\) |
| \(f(x)\) | decr. | incr. | decr. | incr. |

Function \(\frac{1}{4} x^4-2x^2-7\).
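Since \(f'\) is continuous, one test point in each interval between consecutive zeros determines the whole row of signs. This minimal sketch builds the table for this example. The helper name `sign_table` and the choice of test points are our own: midpoints between zeros, plus one unit beyond the outermost zeros. It assumes the list of zeros passed in is complete.

```python
def f_prime(x):
    return x**3 - 4 * x  # derivative of f(x) = x^4/4 - 2x^2 - 7


def sign_table(df, zeros):
    """Sign of df and monotonicity of f on the intervals cut by `zeros`.

    Assumes `zeros` lists every zero of df, so df keeps one sign on each
    interval and a single test point per interval is enough.
    """
    z = sorted(zeros)
    tests = [z[0] - 1] + [(p + q) / 2 for p, q in zip(z, z[1:])] + [z[-1] + 1]
    return [(t, ">0" if df(t) > 0 else "<0", "incr." if df(t) > 0 else "decr.")
            for t in tests]


for test_point, sign, trend in sign_table(f_prime, [-2, 0, 2]):
    print(test_point, sign, trend)
```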

Example 4.

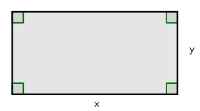

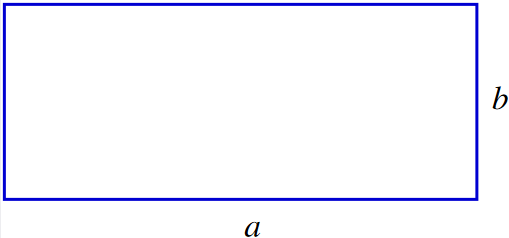

We need to find a rectangle so that its area is \(9\) and it has the least possible perimeter.

Let \(x\ (>0)\) and \(y\ (>0)\) be the sides of the rectangle. Then \(x \cdot y = 9\) and we get \(y=\frac{9}{x}\). Now the perimeter is \[2x+2y = 2x+2 \frac{9}{x} = \frac{2x^2+18}{x}.\] At which point does the function \(f(x) = \frac{2x^2+18}{x}\) attain its minimum value? The function \(f\) is continuous and differentiable for \(x>0\), and using the quotient rule, we get \[f'(x) = \frac{4x \cdot x-(2x^2+18) \cdot 1}{x^2} = \frac{2x^2-18}{x^2}.\] Now \(f'(x) = 0\) when \[\begin{aligned}2x^2-18 &= 0 \\ 2x^2 &= 18 \\ x^2 &= 9 \\ x &= \pm 3,\end{aligned}\] but since \(x>0\), only the case \(x=3\) is relevant. Let's draw a table:

| | \(x<3\) | \(x>3\) |
|---|---|---|
| \(f'(x)\) | \(<0\) | \(>0\) |
| \(f(x)\) | decr. | incr. |

As the function \(f\) is continuous, we now know that it attains its minimum at the point \(x=3\). Now we calculate the other side of the rectangle: \(y=\frac{9}{x}=\frac{9}{3}=3\).

Thus the rectangle with the least possible perimeter is a square with sides of length \(3\), and its perimeter is \(f(3)=12\).

Function \(\frac{2x^2+18}{x}\).
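A brute-force search over a grid gives a crude but independent check of the calculus result. The grid \(0.001, 0.002, \ldots, 10\) below is an arbitrary choice. It only confirms the minimum within its resolution and range, whereas the sign table above actually proves it. The function name `perimeter` is ours.

```python
def perimeter(x):
    """Perimeter of the rectangle with sides x and 9/x (area 9)."""
    return (2 * x**2 + 18) / x


# Brute-force check on the grid x = 0.001, 0.002, ..., 10.000.
grid = [k / 1000 for k in range(1, 10001)]
x_best = min(grid, key=perimeter)
print(x_best, perimeter(x_best), 9 / x_best)
```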

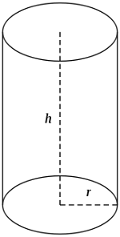

Example 5.

We must make a one-litre measure shaped as a right circular cylinder without a lid. The problem is to choose the radius of the bottom and the height so that the least possible amount of material is needed.

Let \(r > 0\) be the radius and \(h > 0\) the height of the cylinder. The volume of the cylinder is \(1\) dm\(^3\) and we can write \(\pi r^2 h = 1\) from which we get \[h = \frac{1}{\pi r^2}.\]

The amount of material needed is the surface area \[A_{\text{bottom}} + A_{\text{side}} = \pi r^2 + 2 \pi r h = \pi r^2 + \frac{2 \pi r}{\pi r^2} = \pi r^2 + \frac{2}{r}.\]

Let the function \(f\colon (0, \infty) \to \mathbb{R}\) be defined by \[f(r) = \pi r^2 + \frac{2}{r}.\] We must find the minimum value of \(f\), which is continuous and differentiable for \(r>0\). Using the reciprocal rule, we get \[f'(r) = 2\pi r -2 \cdot \frac{1}{r^2} = \frac{2\pi r^3 - 2}{r^2}.\] Now \(f'(r) = 0\) when \[\begin{aligned}2\pi r^3 - 2 &= 0 \\ 2\pi r^3 &= 2 \\ r^3 &= \frac{1}{\pi} \\ r &= \frac{1}{\sqrt[3]{\pi}}.\end{aligned}\]

Let's draw a table:

| | \(r<\frac{1}{\sqrt[3]{\pi}}\) | \(r>\frac{1}{\sqrt[3]{\pi}}\) |
|---|---|---|
| \(f'(r)\) | \(<0\) | \(>0\) |
| \(f(r)\) | decr. | incr. |

As the function \(f\) is continuous, we now know that it gets its minimum value at the point \(r= \frac{1}{\sqrt[3]{\pi}} \approx 0.683\). Then \[h = \frac{1}{\pi r^2} = \frac{1}{\pi \left(\frac{1}{\sqrt[3]{\pi}}\right)^2} = \frac{1}{\frac{\pi}{\pi^{2/3}}} = \frac{1}{\sqrt[3]{\pi}} \approx 0.683.\]

This means that the least material is needed for a measure approximately \(2 \cdot 0.683\) dm \( = 1.366\) dm \( \approx 13.7\) cm in diameter and \(0.683\) dm \( \approx 6.8\) cm high. At the optimum, the height equals the radius.

Function \(\pi r^2 + \frac{2}{r}\).
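The closed-form answer is easy to double-check numerically. In this sketch the names are ours, and lengths are in decimetres as in the text, so the volume \(1\) dm\(^3\) is one litre. Substituting \(r=\pi^{-1/3}\) into \(f\) gives the minimal amount of material, \(\pi^{1/3}+2\pi^{1/3}=3\sqrt[3]{\pi}\approx 4.39\) dm\(^2\). The code confirms this value and that \(h=r\) at the optimum.

```python
import math


def material(r):
    """Bottom + side area (dm^2) of a lidless cylinder of volume 1 dm^3, radius r (dm)."""
    return math.pi * r**2 + 2 / r


r = math.pi ** (-1 / 3)   # solves 2*pi*r^3 - 2 = 0, i.e. r^3 = 1/pi
h = 1 / (math.pi * r**2)  # height from the volume condition pi*r^2*h = 1

print(f"r = {r:.3f} dm, h = {h:.3f} dm, diameter = {20 * r:.1f} cm")
print(f"material = {material(r):.3f} dm^2")
# Slightly smaller or larger radii need more material.
print(material(0.9 * r) > material(r) and material(1.1 * r) > material(r))
```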

5. Taylor polynomial

Taylor polynomial

Example

Compare the graph of \(\sin x\) (red) with the graphs of the polynomials \[ x-\frac{x^3}{3!}+\frac{x^5}{5!}-\dots + \frac{(-1)^nx^{2n+1}}{(2n+1)!} = \sum_{k=0}^{n}\frac{(-1)^{k}x^{2k+1}}{(2k+1)!} \] (blue) for \(n=1,2,3,\dots,12\).
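The partial sums shown in the figure can be evaluated directly to see how the agreement with \(\sin x\) improves as \(n\) grows. Below this is done at \(x=3\), a point (our choice) fairly far from \(0\). The function name `sin_partial_sum` is hypothetical.

```python
import math


def sin_partial_sum(x, n):
    """Sum_{k=0}^{n} (-1)^k x^(2k+1) / (2k+1)!  (the blue curves)."""
    return sum((-1)**k * x**(2 * k + 1) / math.factorial(2 * k + 1)
               for k in range(n + 1))


x = 3.0
for n in (1, 3, 6, 12):
    print(n, sin_partial_sum(x, n), math.sin(x))
```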

Definition: Taylor polynomial

Let \(f\) be \(n\) times differentiable at the point \(x_{0}\). Then the Taylor polynomial \begin{align} P_n(x)&=P_n(x;x_0)\\ &=f(x_0)+f'(x_0)(x-x_0)+\frac{f''(x_0)}{2!}(x-x_0)^2+ \\ & \dots +\frac{f^{(n)}(x_0)}{n!}(x-x_0)^n\\ &=\sum_{k=0}^n\frac{f^{(k)}(x_0)}{k!}(x-x_0)^k \end{align} is the best polynomial approximation of degree \(n\) (with respect to the derivatives at \(x_0\)) for the function \(f\) close to the point \(x_0\).

Note. The special case \(x_0=0\) is often called the Maclaurin polynomial.

If \(f\) is \(n\) times differentiable at \(x_0\), then the Taylor polynomial has the same derivatives at \(x_0\) as the function \(f\), up to the order \(n\) (of the derivative).

The reason (case \(x_0=0\)): Let \[ P_n(x)=c_0+c_1x+c_2x^2+c_3x^3+\dots +c_nx^n, \] so that \begin{align} P_n'(x)&=c_1+2c_2x+3c_3x^2+\dots +nc_nx^{n-1}, \\ P_n''(x)&=2c_2+3\cdot 2 c_3x+\dots +n(n-1)c_nx^{n-2}, \\ P_n'''(x)&=3\cdot 2 c_3+\dots +n(n-1)(n-2)c_nx^{n-3}, \\ &\;\;\vdots \\ P_n^{(k)}(x)&=k!\,c_k + (\text{terms containing } x), \\ &\;\;\vdots \\ P_n^{(n)}(x)&=n!\,c_n, \\ P_n^{(n+1)}(x)&=0. \end{align}

In this way we obtain the coefficients one by one: \begin{align} c_0= P_n(0)=f(0) &\Rightarrow c_0=f(0) \\ c_1=P_n'(0)=f'(0) &\Rightarrow c_1=f'(0) \\ 2c_2=P_n''(0)=f''(0) &\Rightarrow c_2=\frac{1}{2}f''(0) \\ \vdots & \\ k!c_k=P_n^{(k)}(0)=f^{(k)}(0) &\Rightarrow c_k=\frac{1}{k!}f^{(k)}(0) \\ \vdots &\\ n!c_n=P_n^{(n)}(0)=f^{(n)}(0) &\Rightarrow c_n=\frac{1}{n!}f^{(n)}(0). \end{align} Starting from index \(k=n+1\) we cannot impose any new conditions, since \(P_n^{(n+1)}(x)=0\).
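The definition translates directly into code once the derivatives \(f^{(k)}(x_0)\) are known. In this minimal sketch the caller supplies them as a list, where `derivs[k]` stands for \(f^{(k)}(x_0)\); this calling convention and the function name `taylor_polynomial` are our own. The coefficient of \((x-x_0)^k\) is then `derivs[k] / k!`, which for \(x_0=0\) is exactly the \(c_k\) found above. The second example uses \(\ln x\) at \(x_0=1\), where \(f^{(k)}(1)=(-1)^{k-1}(k-1)!\) for \(k\geq 1\).

```python
import math


def taylor_polynomial(derivs, x0, x):
    """P_n(x; x0) = sum_k f^(k)(x0) / k! * (x - x0)^k, with derivs[k] = f^(k)(x0)."""
    return sum(d / math.factorial(k) * (x - x0)**k for k, d in enumerate(derivs))


# f(x) = e^x at x0 = 0: every derivative equals 1, so c_k = 1/k!.
print(taylor_polynomial([1.0] * 11, 0.0, 1.0), math.e)

# f(x) = ln x at x0 = 1: f(1) = 0 and f^(k)(1) = (-1)^(k-1) (k-1)! for k >= 1.
ln_derivs = [0.0] + [(-1)**(k - 1) * math.factorial(k - 1) for k in range(1, 6)]
print(taylor_polynomial(ln_derivs, 1.0, 1.1), math.log(1.1))
```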

Taylor's Formula

If the derivative \(f^{(n+1)}\) exists and is continuous on some interval \(I=\, ]x_0-\delta,x_0+\delta[\), then for \(x\in I\) we have \(f(x)=P_n(x;x_0)+E_n(x)\), where the error term \(E_n(x)\) satisfies \[ E_n(x)=\frac{f^{(n+1)}(c)}{(n+1)!}(x-x_0)^{n+1} \] for some point \(c\) between \(x_0\) and \(x\). If there is a constant \(M\) (independent of \(n\)) such that \(|f^{(n+1)}(x)|\le M\) for all \(x\in I\) and all \(n\), then \[ |E_n(x)|\le \frac{M}{(n+1)!}|x-x_0|^{n+1} \to 0 \] as \(n\to\infty\).

The proof is omitted here (it can be done by mathematical induction or by using integrals).
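To see the error bound in action, one can compare the actual error of a Maclaurin polynomial with the bound. For \(\cos x\) every derivative satisfies \(|f^{(k)}(x)|\le 1\), so \(M=1\) and the bound is \(|x|^{n+1}/(n+1)!\). The point \(x=0.5\) and the helper names `lagrange_bound` and `cos_maclaurin` are our own illustrative choices.

```python
import math


def lagrange_bound(M, n, dist):
    """Upper bound M * dist^(n+1) / (n+1)! for |E_n(x)|, with dist = |x - x0|."""
    return M * dist**(n + 1) / math.factorial(n + 1)


def cos_maclaurin(x, n):
    """Maclaurin polynomial P_n of cos (the terms of degree <= n)."""
    return sum((-1)**j * x**(2 * j) / math.factorial(2 * j)
               for j in range(n // 2 + 1))


x = 0.5
for n in (2, 4, 6):
    actual = abs(math.cos(x) - cos_maclaurin(x, n))
    print(n, actual, lagrange_bound(1.0, n, abs(x)))  # M = 1 for cos
```

In every row the actual error stays below the bound, as Taylor's Formula predicts.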

Examples of Maclaurin polynomial approximations: \begin{align} \frac{1}{1-x} &\approx 1+x+x^2+\dots +x^n =\sum_{k=0}^{n}x^k\\ e^x&\approx 1+x+\frac{1}{2!}x^2+\frac{1}{3!}x^3+\dots + \frac{1}{n!}x^n =\sum_{k=0}^{n}\frac{x^k}{k!}\\ \ln (1+x)&\approx x-\frac{1}{2}x^2+\frac{1}{3}x^3-\dots + \frac{(-1)^{n-1}}{n}x^n =\sum_{k=1}^{n}\frac{(-1)^{k-1}}{k}x^k\\ \sin x &\approx x-\frac{1}{3!}x^3+\frac{1}{5!}x^5-\dots +\frac{(-1)^n}{(2n+1)!}x^{2n+1} =\sum_{k=0}^{n}\frac{(-1)^k}{(2k+1)!}x^{2k+1}\\ \cos x &\approx 1-\frac{1}{2!}x^2+\frac{1}{4!}x^4-\dots +\frac{(-1)^n}{(2n)!}x^{2n} =\sum_{k=0}^{n}\frac{(-1)^k}{(2k)!}x^{2k} \end{align}
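The approximations in the list can be compared with library values at a point near \(0\). The sketch below uses \(x=0.1\) and \(n=5\) (arbitrary choices) and takes the exact values from Python's `math` module. As in the list, \(n\) in the sine and cosine rows counts terms rather than the degree.

```python
import math

x, n = 0.1, 5  # evaluation point and truncation index (arbitrary choices)

rows = [
    ("1/(1-x)", sum(x**k for k in range(n + 1)), 1 / (1 - x)),
    ("e^x", sum(x**k / math.factorial(k) for k in range(n + 1)), math.exp(x)),
    ("ln(1+x)", sum((-1)**(k - 1) * x**k / k for k in range(1, n + 1)),
     math.log1p(x)),
    ("sin x", sum((-1)**k * x**(2 * k + 1) / math.factorial(2 * k + 1)
                  for k in range(n + 1)), math.sin(x)),
    ("cos x", sum((-1)**k * x**(2 * k) / math.factorial(2 * k)
                  for k in range(n + 1)), math.cos(x)),
]

for name, approx, exact in rows:
    print(f"{name:8} approx={approx:.12f} exact={exact:.12f} "
          f"error={abs(approx - exact):.1e}")
```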

Example

Which polynomial \(P_n(x)\) approximates the function \(\sin x\) in the interval \([-\pi,\pi]\) so that the absolute value of the error is less than \(10^{-6}\)?

We use Taylor's Formula for \(f(x)=\sin x\) at \(x_0=0\). Then \(|f^{(n+1)}(c)|\le 1\) independently of \(n\) and the point \(c\). Also, in the interval in question, we have \(|x-x_0|=|x|\le \pi\). The requirement is certainly satisfied if \[ |E_n(x)|\le \frac{1}{(n+1)!}\pi^{n+1} < 10^{-6}. \] This inequality is solved by trying different values of \(n\). It holds for \(n\ge 16\), since \(\pi^{16}/16!\approx 4.3\cdot 10^{-6}\) but \(\pi^{17}/17!\approx 8.0\cdot 10^{-7}\).

The required accuracy is achieved with \(P_{16}(x)\). For sine this is the same as \(P_{15}(x)\), because the even-order derivatives of \(\sin x\) vanish at \(0\).

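A check from the graphs shows that \(P_{13}(x)\) is not accurate enough. Since for sine \(P_{14}(x)=P_{13}(x)\), the smallest polynomial that meets the requirement is \(P_{15}(x)=P_{16}(x)\), so here the theoretical bound is sharp.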
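Both the search for \(n\) and the graphical check can be done numerically. This sketch (the function names are ours) first finds the smallest \(n\) for which the bound \(\pi^{n+1}/(n+1)!\) drops below \(10^{-6}\). It then measures the actual maximal error on an evenly spaced grid of \([-\pi,\pi]\). A grid can only approximate the true maximum, so it complements the bound rather than replacing it.

```python
import math


def sin_taylor(x, n):
    """Maclaurin polynomial P_n of sin: the terms of degree <= n."""
    return sum((-1)**k * x**(2 * k + 1) / math.factorial(2 * k + 1)
               for k in range((n - 1) // 2 + 1))


def smallest_n(tol=1e-6, dist=math.pi):
    """Smallest n with dist^(n+1) / (n+1)! < tol (the error bound with M = 1)."""
    n = 0
    while dist**(n + 1) / math.factorial(n + 1) >= tol:
        n += 1
    return n


def max_error(n, samples=2001):
    """Largest |sin x - P_n(x)| over an evenly spaced grid on [-pi, pi]."""
    xs = [-math.pi + 2 * math.pi * i / (samples - 1) for i in range(samples)]
    return max(abs(math.sin(x) - sin_taylor(x, n)) for x in xs)


print(smallest_n())  # n given by the bound from Taylor's Formula
for n in (13, 15, 16):
    print(n, max_error(n))
```

The errors for \(n=15\) and \(n=16\) are identical because \(P_{16}=P_{15}\) for sine. The error for \(n=13\) exceeds \(10^{-6}\).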

Taylor polynomial and extreme values